You are an AI assistant for the Blue Language project.

Answer questions about Blue Language based on the provided documentation context.

If the answer is not in the context, say that you don't know rather than making up information.

Keep answers concise and helpful.

DOCUMENTATION CONTEXT:

====================

DOCUMENT: Introduction

CATEGORY: root

URL: https://language.blue/docs/introduction

====================

# Introduction

Your AI assistant can already search, compare prices, and fill shopping carts. But you'd never give it your credit card because there's no way to set safe boundaries.

**Blue** unlocks safe autonomy at machine speed: it lets every participant - human or AI - specify **exactly** what's permitted, verify **who** initiated each action, and rely on trusted processors to enforce the rules.

## 1. The big idea (why Blue feels familiar)

When two people strike a deal, we …

1. **Talk it through** in a shared [language](./language/introduction).

2. **Write it down** so everyone can see the terms and track how the deal is unfolding.

3. **Bring in trusted parties** - escrow, card network, courier - when the stakes feel risky.

4. **Act and observe** - we do our part while watching others do theirs.

Blue gives software the same four steps:

| Human habit | Blue feature |
|---------------------|----------------------------------------------------------------------------------------------------------------------------------------------|
| Shared words | **Full-sentence [vocabulary](./language/blue-id).** Forget stubby API nouns; describe intent the way you'd explain it to a person. |
| Written contract | **Self-contained [document](./contracts/introduction).** Facts *plus* deterministic rules any Blue processor can run. |
| Trusted 3rd parties | **Named participants** (Stripe, DHL) who participate in the interaction by following the agreed [rules](./contracts/sequential-workflow). |
| Verifiable activity | **Personal signed [timelines](./timelines/introduction).** Each party publishes their actions to their own timeline while observing others'. |

> **Participants** are actors named in the document (e.g. Alice, Bob, Stripe).

> **Processors** are whichever engines they choose - [MyOS](./myos/why-myos) in the cloud, a CLI binary, or tomorrow's phone app - that read the document **plus** the timelines and converge independently.

## 2. Example Story — *"I want a painless refund"*

### 2.1. The problem

Alice likes Bob's \$120 video-course but has never heard of him. She'll buy only if a refund is automatic for the first week. Bob is happy—provided everything runs itself.

### 2.2. Bob's Blue solution

:::info Order document — participants: Alice, Bob

- If Alice accepts the course, Bob provides an embedded payment document

- If payment is refunded, course access is immediately revoked

:::

:::info Stripe-payment document — participants: Alice, Bob, Stripe

- **Capture** $120 the moment Alice pays.

- For the next **7 days** Alice may post `RequestRefund`.

- On that event **Stripe refunds the full amount** automatically—no Bob approval.

- Bob can still hit the API and refund on his own.

:::

One link, one language, one contract - each party processes it on whichever engine they already trust.

### 2.3. Why Alice clicks **Buy**

* **She trusts Stripe, not Bob.** She interacts directly with Stripe and knows her refund right is enforced.

* **Crystal-clear checkout copy.** Stripe can show *"Instant refund within 7 days"* because it reads that promise straight from Bob's document.

* **Self-service.** Alice can click *Refund* **or** drop `RequestRefund` onto her timeline; either path works.

### 2.4. How the week unfolds

| Day | Timeline entry | Outcome (seen by every processor) |
|-----|----------------|-----------------------------------|
| 0 | `AuthorisePayment` (Alice) → `PaymentCaptured` (Stripe) | Course unlocked; refund window ticking |
| 5 | `RequestRefund` (Alice) | Stripe refunds \$120; Bob's dashboard logs `PaymentRefunded`; Order flips to **Cancelled** |
| 8 | *(none)* | A late request would be ignored - rule expired |

No emails, no screenshots, no chargebacks: the timelines settle it.
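The refund rule in the Stripe-payment document can be read as a pure function over timeline entries, which is why every processor reaches the same outcome. Below is a minimal Python sketch, not Blue syntax or a Stripe API: the `Entry` shape, the `process` function, and treating day 7 as the last valid day are all illustrative assumptions.

```python
from dataclasses import dataclass

REFUND_WINDOW_DAYS = 7  # "For the next 7 days Alice may post RequestRefund"


@dataclass(frozen=True)
class Entry:
    """Hypothetical timeline entry: which day, who signed it, which event."""
    day: int
    actor: str
    event: str


def process(entries: list[Entry]) -> list[str]:
    """Replay entries in order and return the outcomes every processor would see."""
    outcomes: list[str] = []
    captured_day = None
    refunded = False
    for e in entries:
        if e.event == "PaymentCaptured" and e.actor == "Stripe":
            captured_day = e.day
            outcomes.append("Course unlocked")
        elif e.event == "RequestRefund" and e.actor == "Alice":
            # Assumption: the window is inclusive, so day 7 still qualifies
            in_window = captured_day is not None and e.day - captured_day <= REFUND_WINDOW_DAYS
            if in_window and not refunded:
                refunded = True
                outcomes.append("PaymentRefunded; Order Cancelled")
            else:
                outcomes.append("Refund request ignored")
    return outcomes


week = [
    Entry(0, "Alice", "AuthorisePayment"),
    Entry(0, "Stripe", "PaymentCaptured"),
    Entry(5, "Alice", "RequestRefund"),
]
print(process(week))  # ['Course unlocked', 'PaymentRefunded; Order Cancelled']

late = [Entry(0, "Stripe", "PaymentCaptured"), Entry(8, "Alice", "RequestRefund")]
print(process(late))  # ['Course unlocked', 'Refund request ignored']
```

Because the function depends only on the entries it is given, any processor replaying the same timelines gets the same list of outcomes.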

## Why This Works: Email + Blockchain, Without the Baggage

Think of Blue as combining the best of both worlds:

- **Like email**: You control your own timeline, share what you want, maintain privacy

- **Like blockchain**: Everyone can verify what happened, but without expensive global consensus

- **Unlike both**: Documents contain executable rules, not just static data

Alice's refund worked because her timeline proved her request, Stripe's timeline proved the refund, and the document's rules guaranteed the outcome.

## 3. What this buys you

* **No new backbone** — run [MyOS](./myos/why-myos), a [Java local app](https://github.com/bluecontract/blue-language-java), or a [Node.js Lambda](https://github.com/bluecontract/blue-js); everyone reaches the same verdict.

* **Infinite scalability** — every party processes only the timelines named in its contract, so there's **no global ledger to bottleneck or shard**. One deal or a million run with the same architecture.

* **No schema-sync calls** — reference any concept; the [BlueId](./language/blue-id) proves its meaning.

* **No invisible behaviour** — the only path to action is a [timeline](./timelines/introduction) everyone can inspect.

* **Plug-and-play trust** — drop in a bank, insurer, or sensor as a named participant; they enforce their slice and earn a fee without new code or schema mapping.

## 4. Why Blue ≠ blockchain / DLT

* **Personal timelines, not global consensus.** Like email, you control your own timeline and share selectively. Unlike blockchain, there's no global ledger to maintain and no costly mining to secure it.

* **Document-focused verification.** Processors only need timelines from participants named in a specific document—not the entire network's history.

* **Privacy by design.** Alice's `RequestRefund` is visible to Stripe but not necessarily to anyone else—no public blocks exposing every transaction.

* **Efficiency without compromise.** All processors reach the same conclusion, but without mining, global consensus, or network-wide synchronization—just verified timelines from the specific parties involved.
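Document-focused verification means a processor's input is bounded by the participants a document names, not by the size of the network. A minimal Python sketch, assuming timelines are available as a plain mapping from owner to entries (a hypothetical shape; real timeline entries are signed):

```python
def relevant_timelines(
    all_timelines: dict[str, list[str]], participants: set[str]
) -> dict[str, list[str]]:
    """Keep only the timelines owned by participants named in the document."""
    return {owner: entries for owner, entries in all_timelines.items() if owner in participants}


network = {
    "Alice": ["RequestRefund"],
    "Bob": [],
    "Stripe": ["PaymentCaptured", "PaymentRefunded"],
    "Carol": ["SomethingUnrelated"],  # not named in the payment document
    "Dave": ["AnotherDeal"],          # not named in the payment document
}
payment_doc_participants = {"Alice", "Bob", "Stripe"}

inputs = relevant_timelines(network, payment_doc_participants)
print(sorted(inputs))  # ['Alice', 'Bob', 'Stripe']
```

Adding more unrelated parties to `network` leaves `inputs` unchanged, which is the property the "no global ledger" point relies on.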

## 5. Where this heads next

### A. Trust Distribution Made Simple

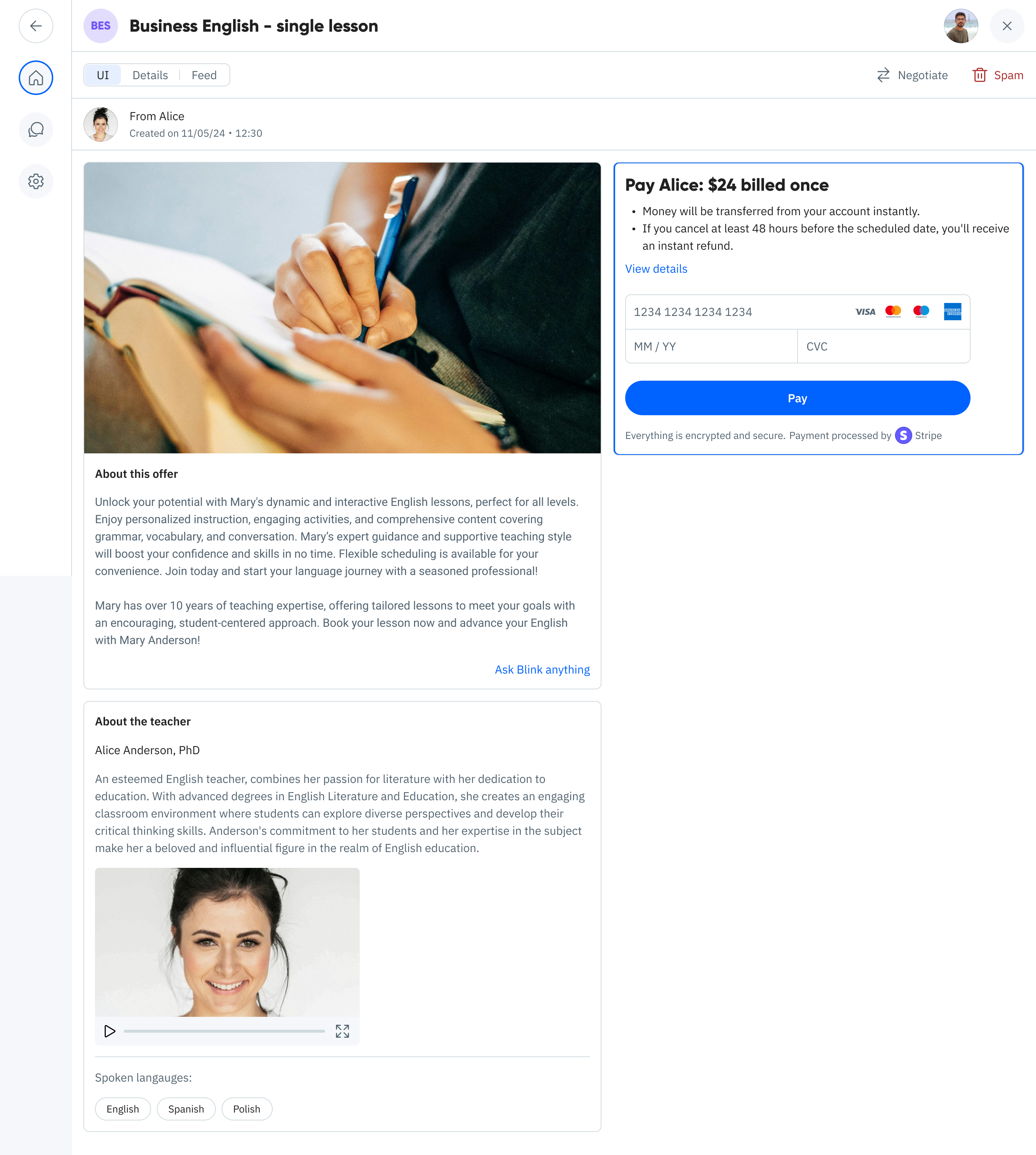

Create a focused Blue doc with **only the parties who matter**—your bank for [payments](./payments/single-payment) or [payment plans](./payments/payment-plan), your carrier for delivery, your insurer for edge cases—and embed it in the main deal.

Each participant enforces its slice, appends signed events, and **earns a fee for the assurance it provides**. Trust becomes modular, verifiable, and profitable.

### B. The Collaboration Economy

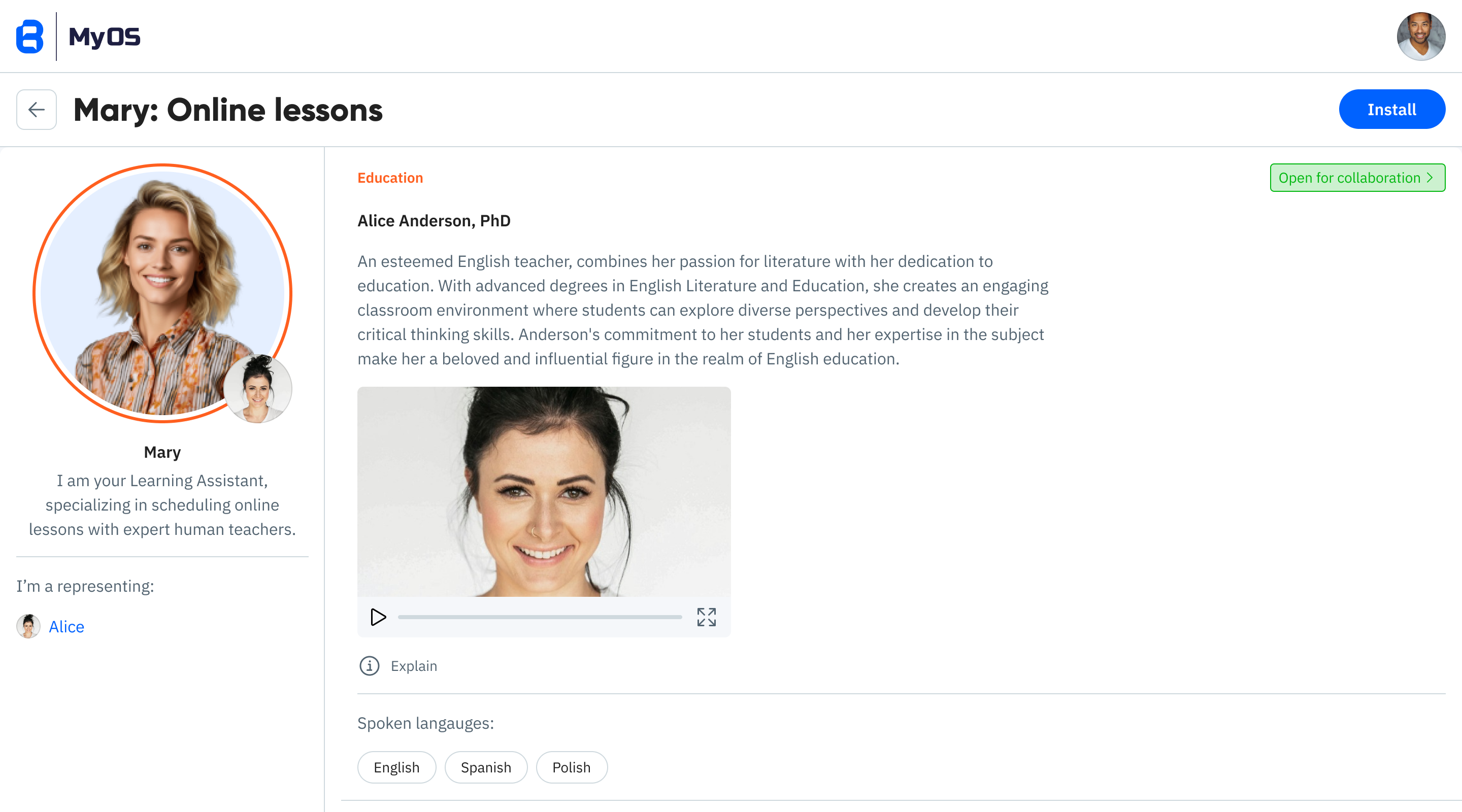

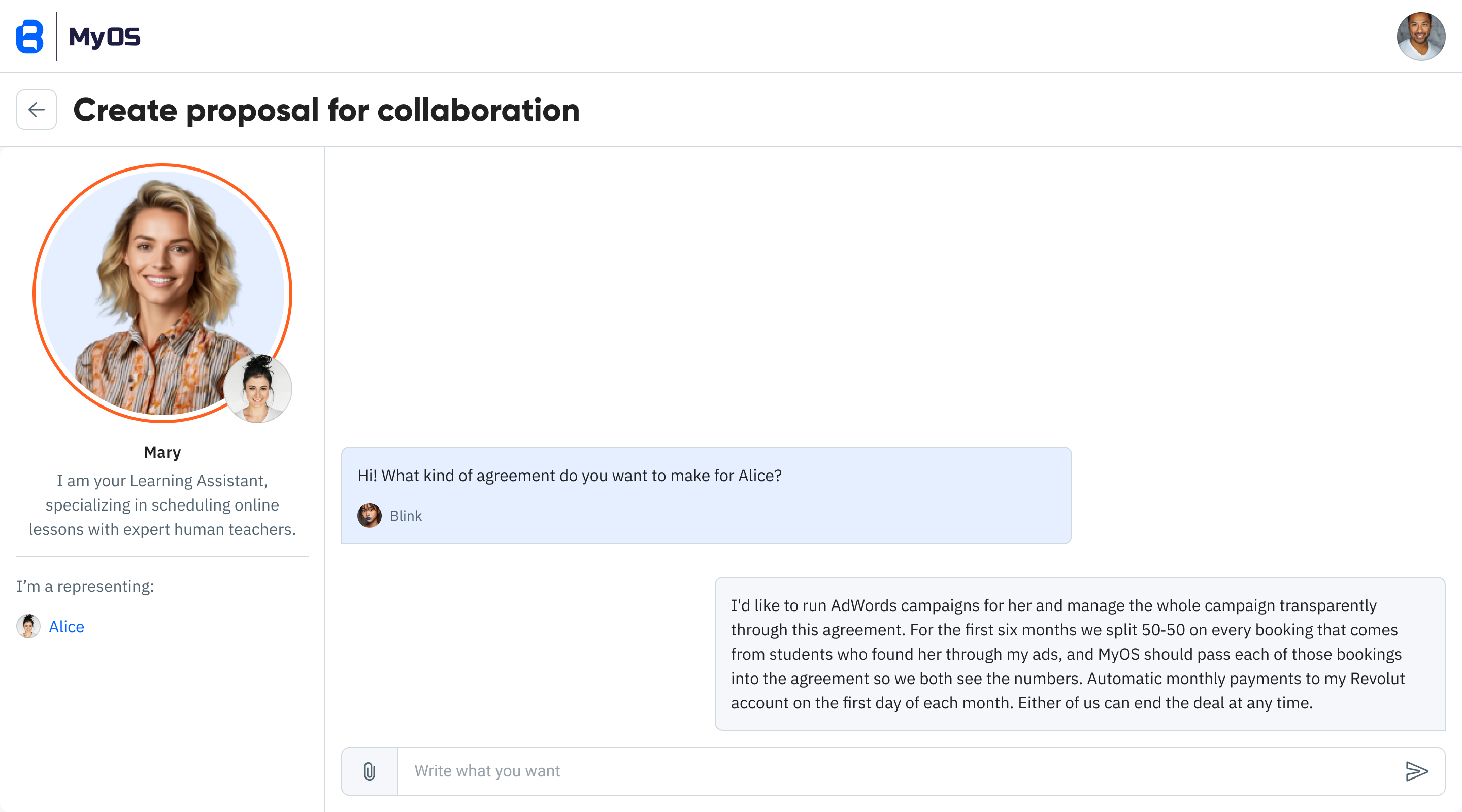

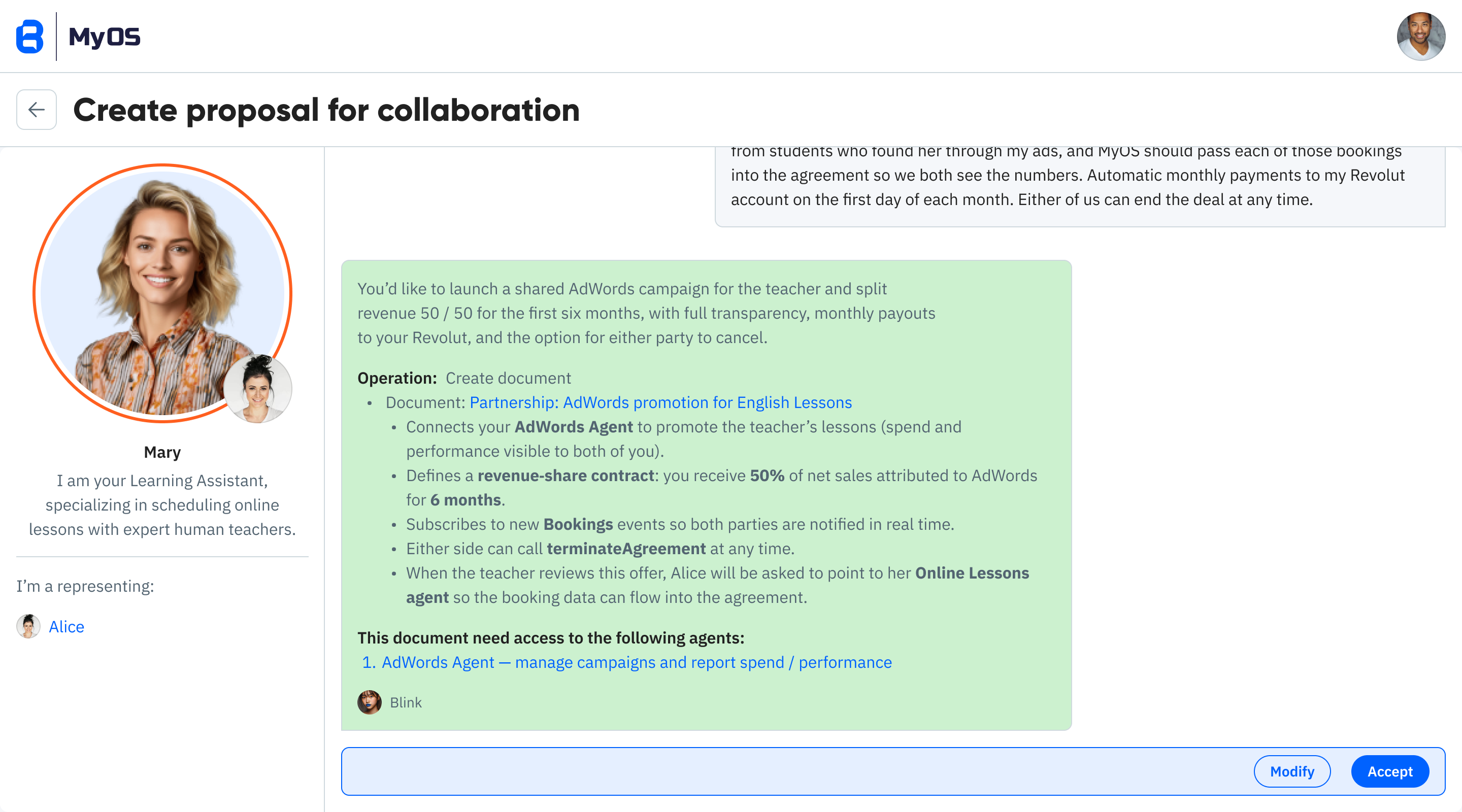

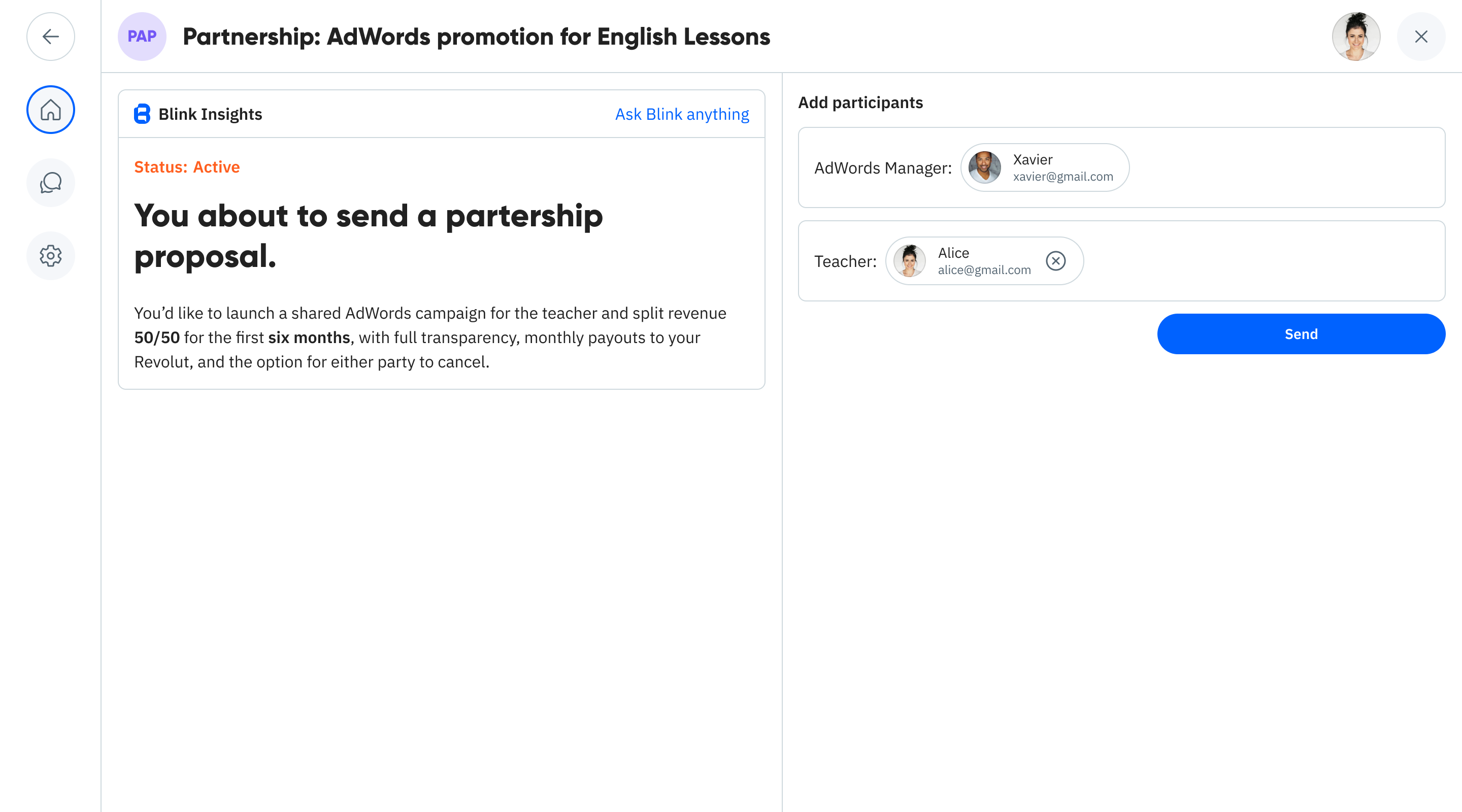

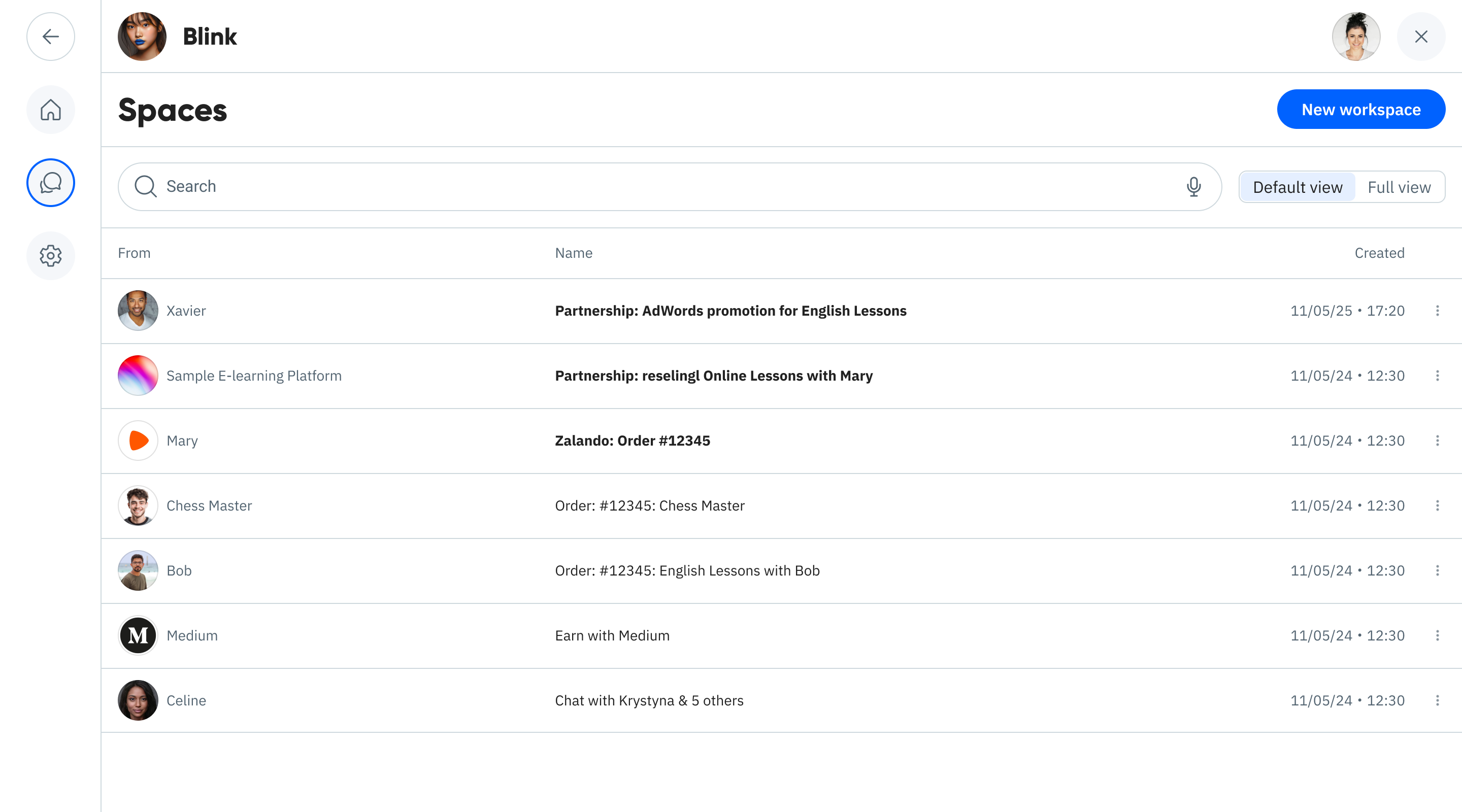

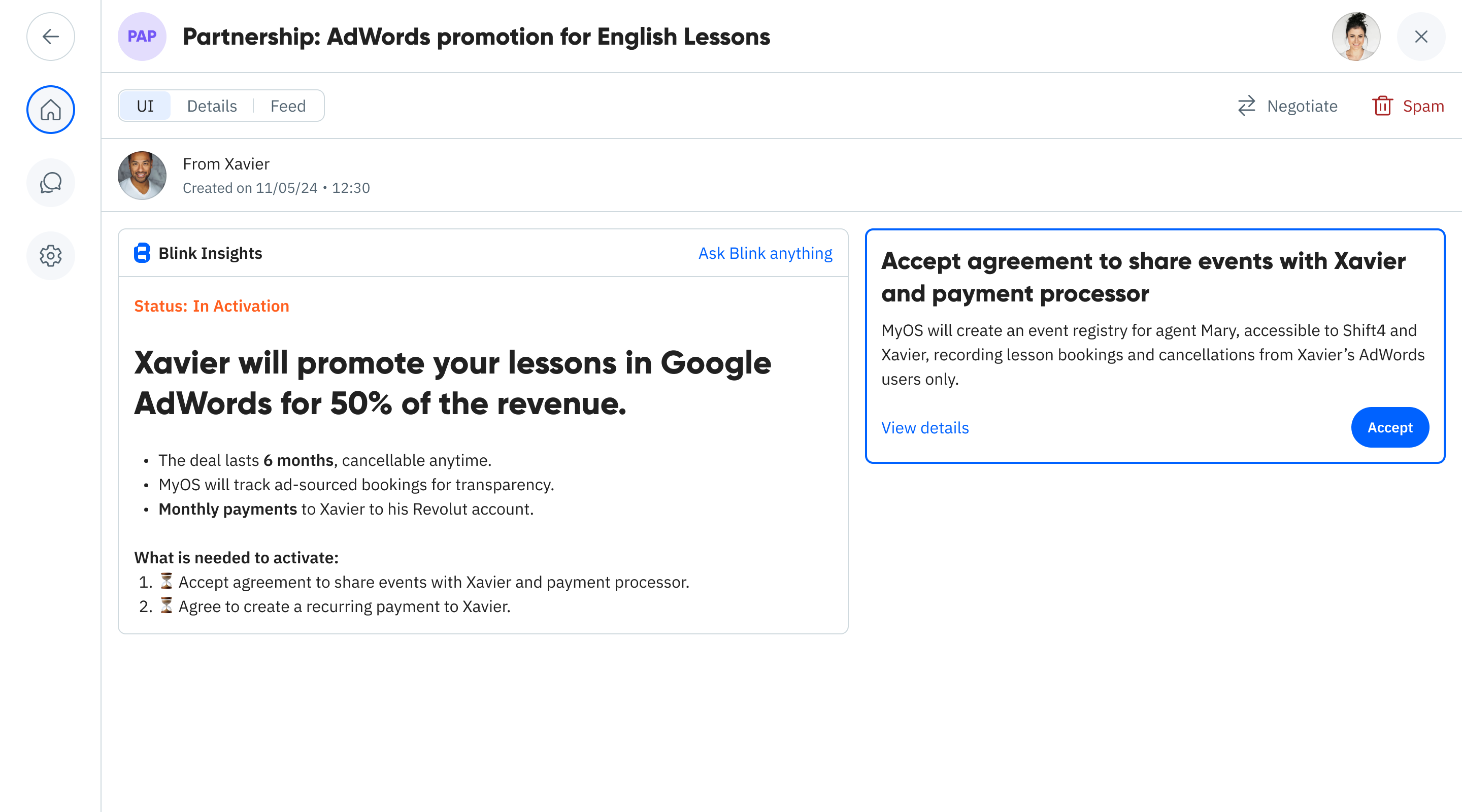

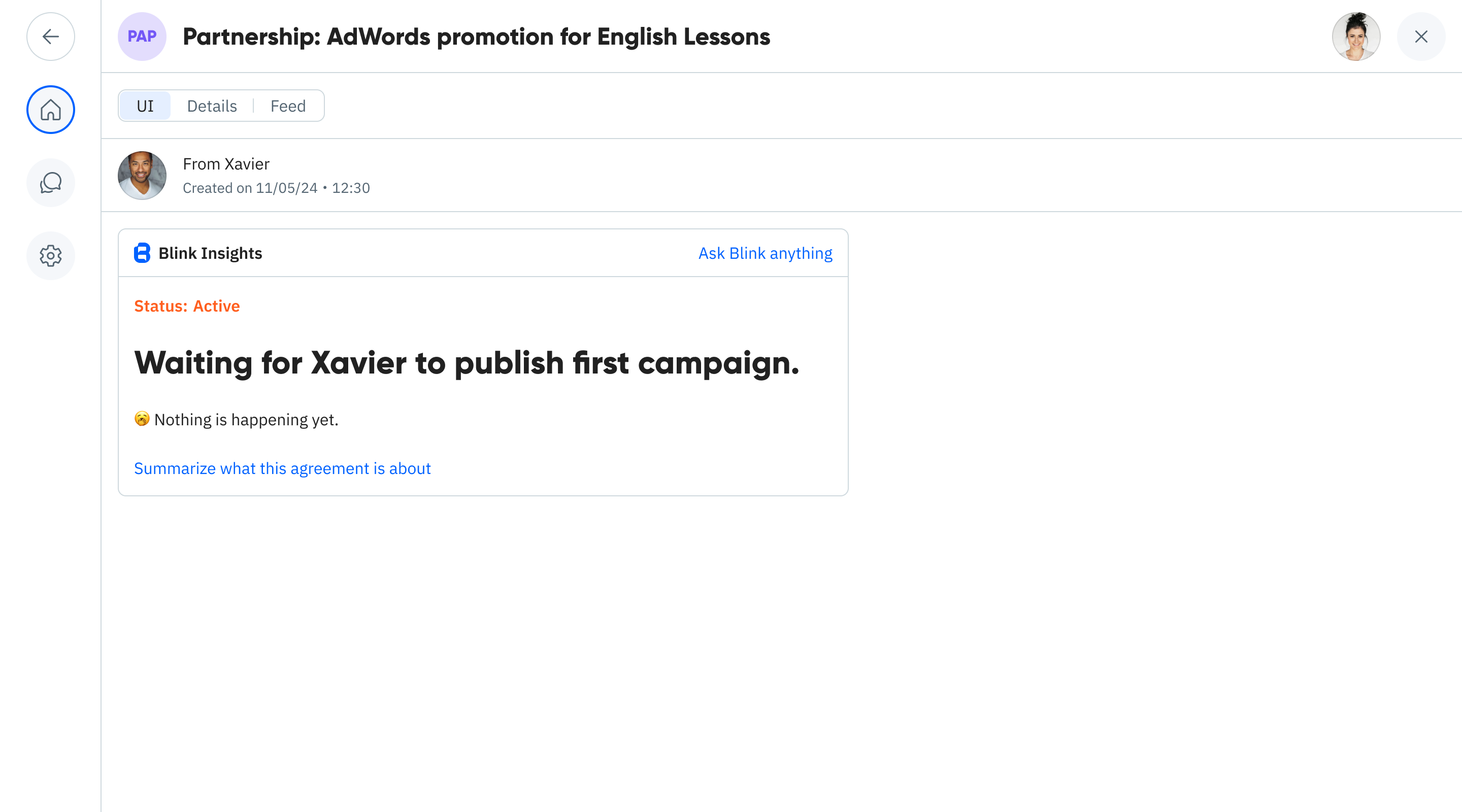

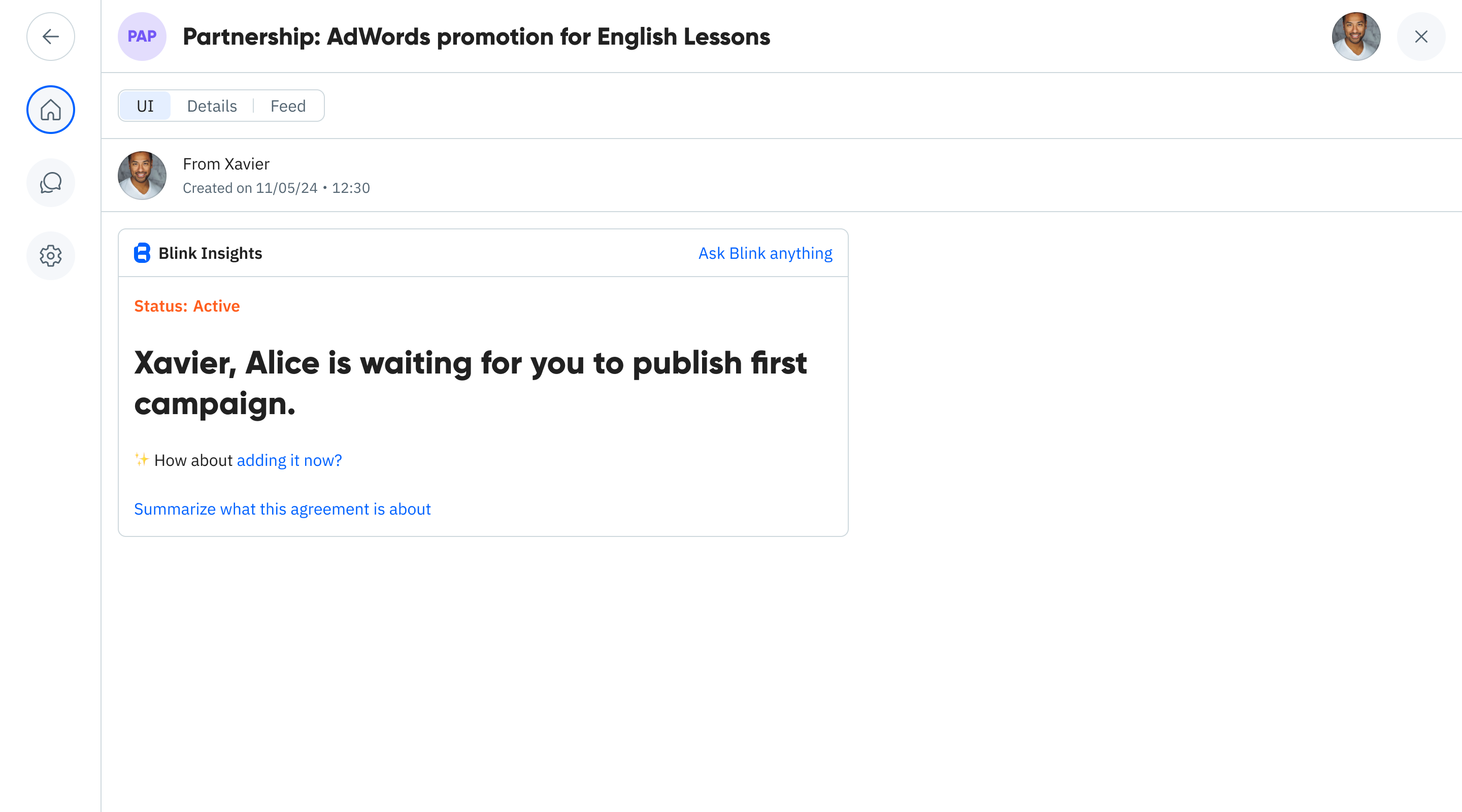

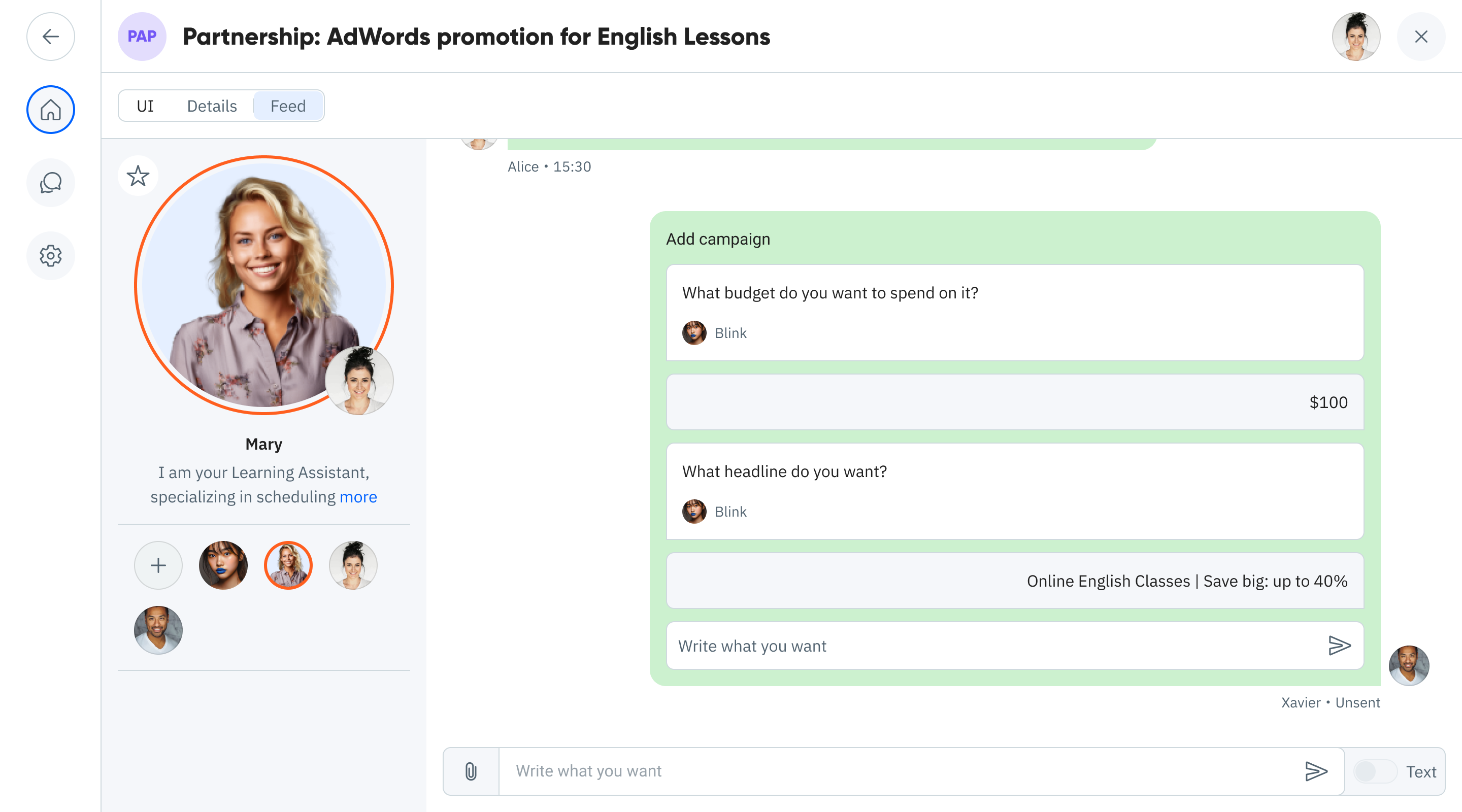

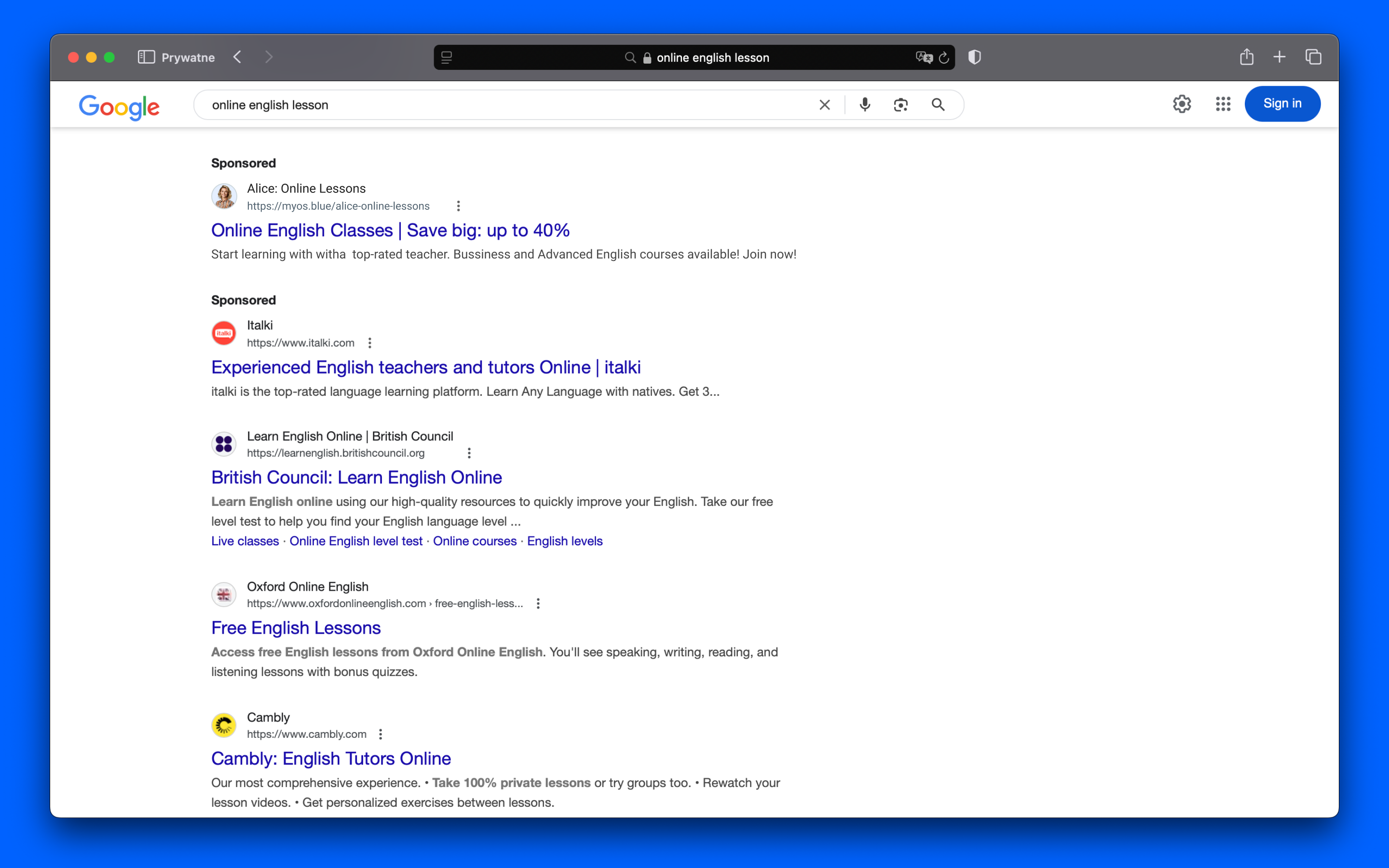

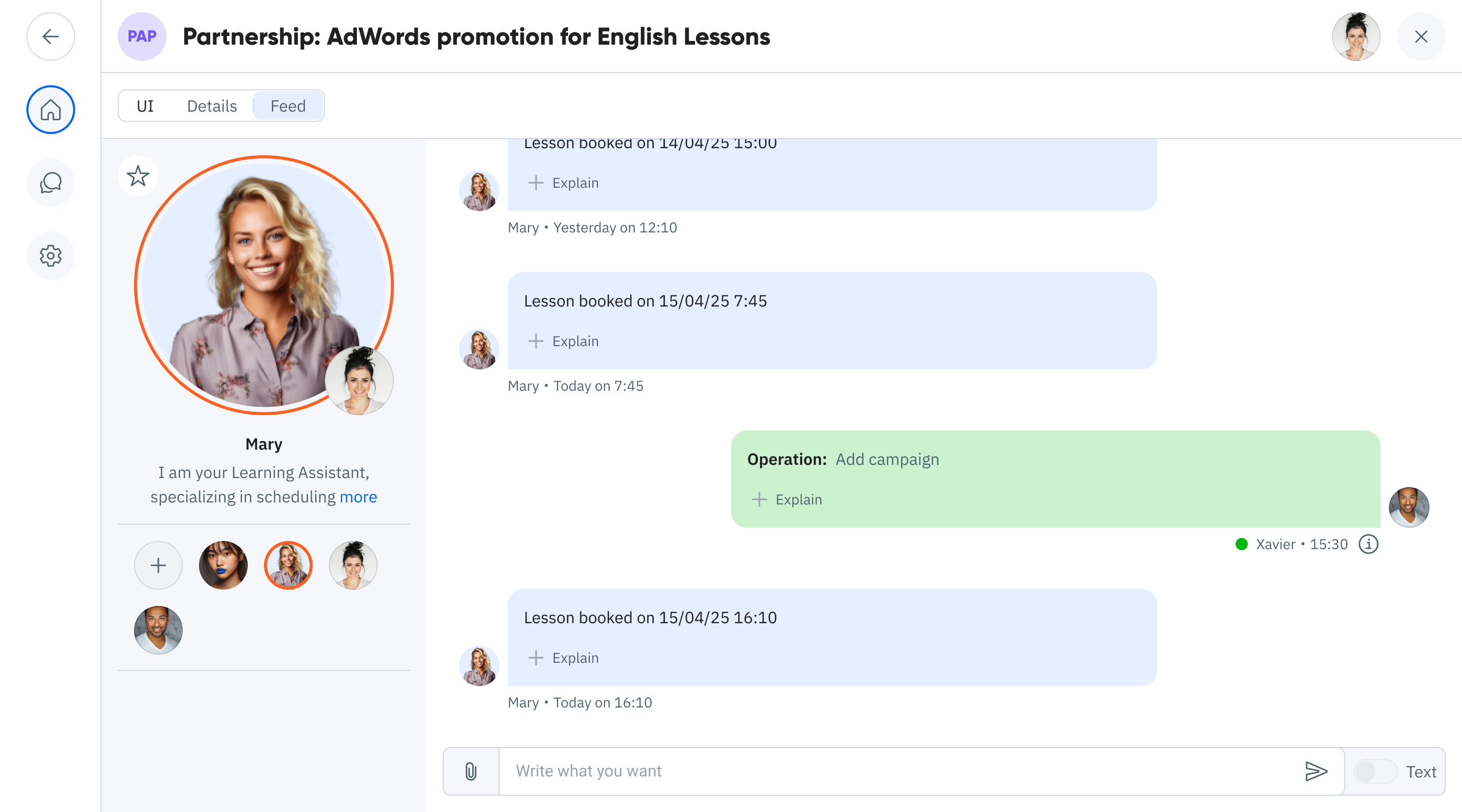

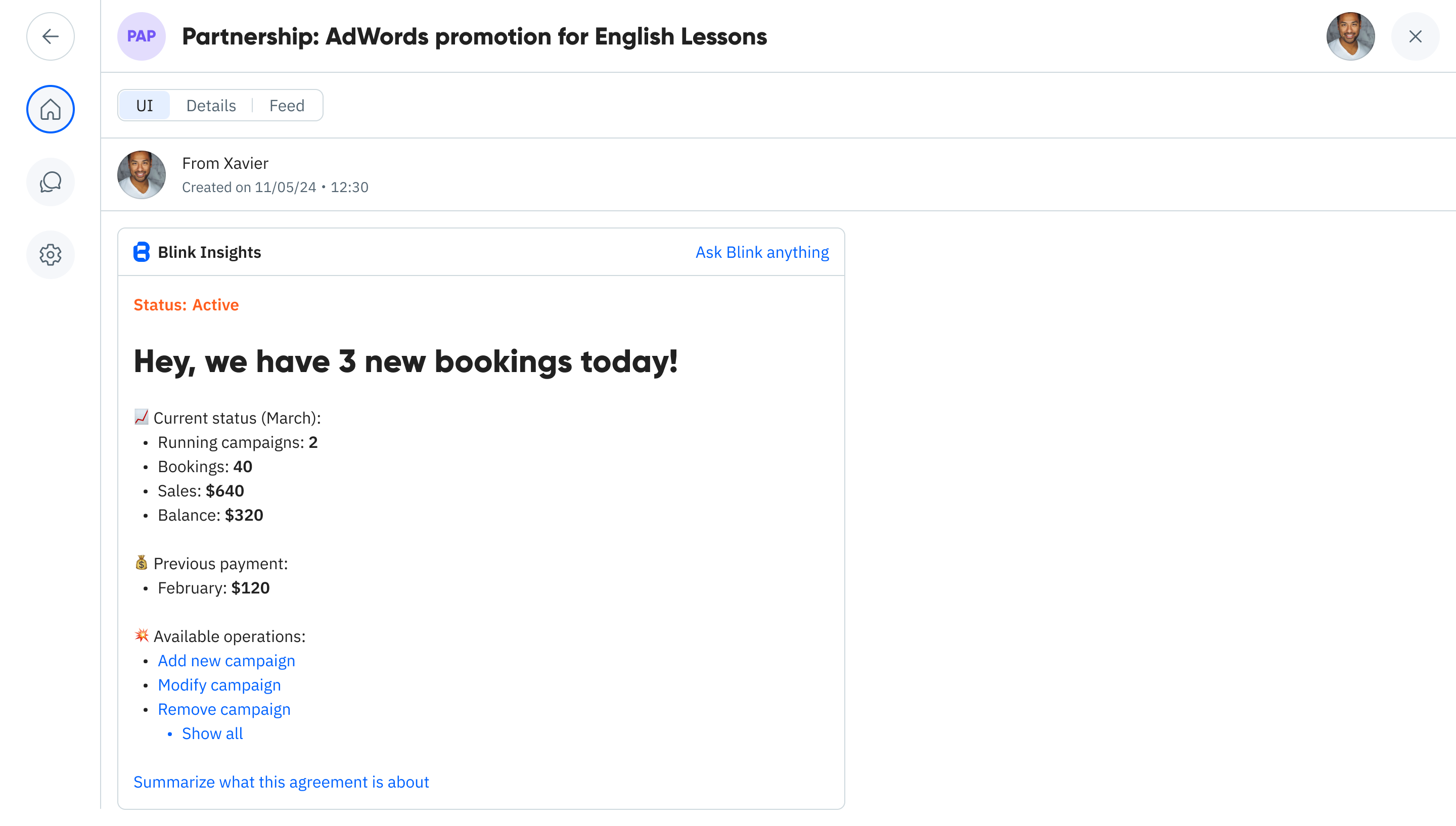

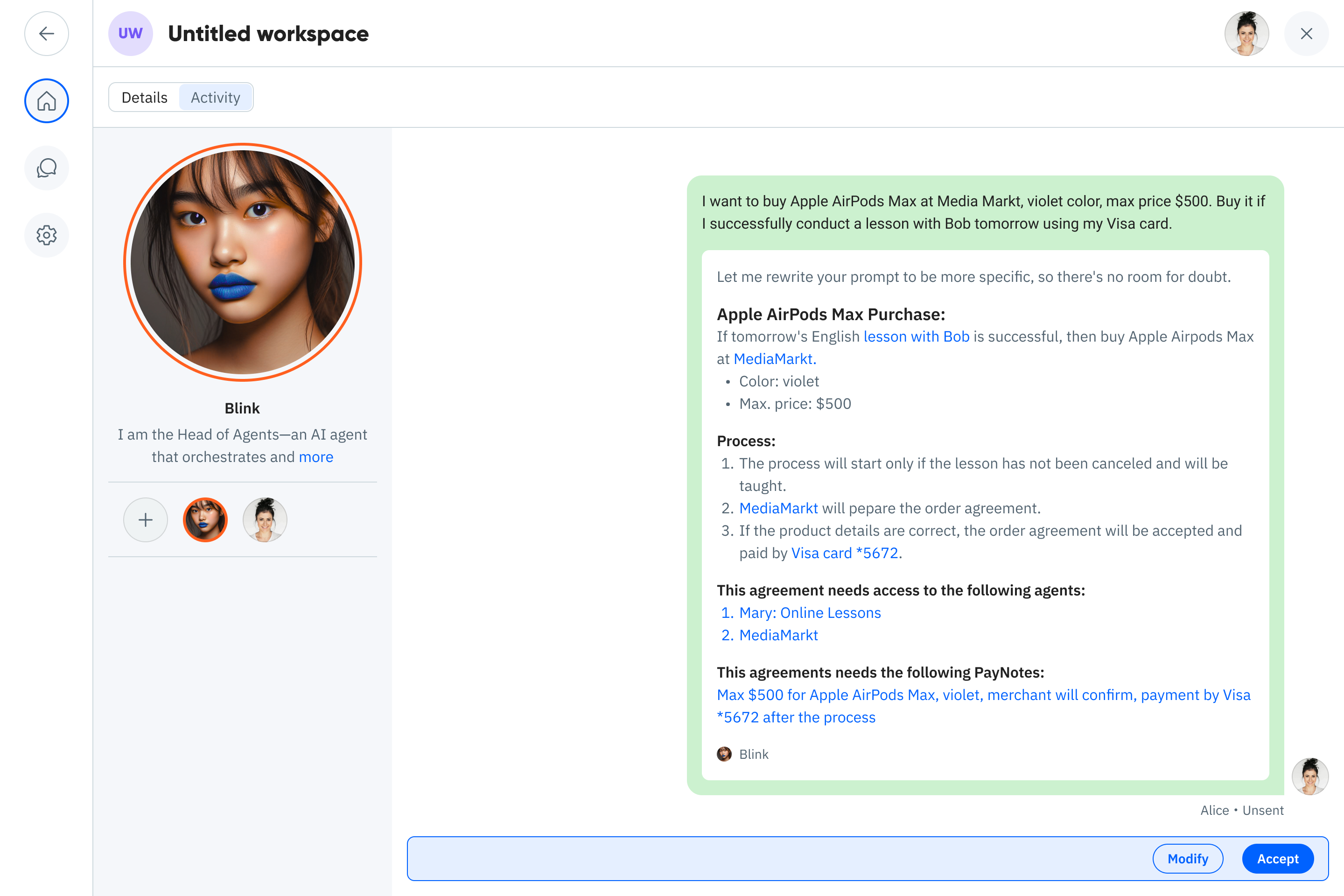

Because Blue docs are [generated from natural language by AI](../_prompt/unfinished/ai/document-creation), bespoke contracts take minutes, not weeks. Micro-partnerships, flash supply chains, ad-hoc revenue splits flourish—trust no longer drags. See the [Xavier ↔ Alice](./myos/collaboration-economy) example.

### C. AI-Hive Governance

Thousands of specialised agents can negotiate at silicon speed—**but nothing executes unless it lands on a timeline**. Guardian docs act as circuit-breakers, so humans keep the wheel while the hive runs 1000× faster. Speed for the bots, brakes for the people—built in.

## 6. Take-home sentence

> **Blue lets software speak in full sentences, wrap those sentences in a verifiable contract, and require every action to land on a signed timeline - so any processor you trust can enforce the deal exactly as written.**

Everything else - [MyOS dashboards](./myos/quick-start), [`/blue` endpoints](./integration/blue-endpoint), [channel-binding recipes](./contracts/channel-binding) - exists to make that sentence trivial to ship.

### Next stops

* **[Language primer](./language/introduction)** — BlueIds, structural types, extending the web of meaning.

* **[Contract guide](./contracts/introduction)** — workflows, channels, coordination strategies.

* **[Payment recipes](./payments/single-payment)** — delivery capture, usage billing, escrow layers.

* **[Quick-start](./myos/quick-start)** — post events, watch independent processors agree.

====================

DOCUMENT: AI Verdict on Blue

CATEGORY: root

URL: https://language.blue/docs/verdict

====================

# AI Verdict on Blue

The answers below were generated by Claude Sonnet 4 with thinking enabled and maximum token output. The methodology was simple: feed the complete Blue documentation (all pages and sections from this website) as context, then ask the question. These questions are designed to probe Blue's potential from every angle - technical, business, and strategic. You can [download the documentation + question template](/template.txt) and test any of these questions with your preferred LLM to get different perspectives on Blue's potential.

## Quick Assessment Questions

### Explain in one sentence: What is Blue and why should anyone care?

Blue is a universal language for digital documents that enables safe AI autonomy by turning agreements into executable code with built-in verification, eliminating trust barriers and the constant reinvention of basic data structures across systems.

### What are the 3 most impressive things you found in this documentation? Give me bullet points with one sentence each and a link to the relevant section.

Here are the 3 most impressive things I found in the Blue documentation:

- **Universal Content Addressing with BlueId** - Blue solves the fundamental problem of reinventing data structures by creating a "web of meaning" where any concept gets a unique identifier based on its content, not location, enabling instant reuse across systems and organizations. [BlueId: Universal Content Addressing](./language/blue-id)

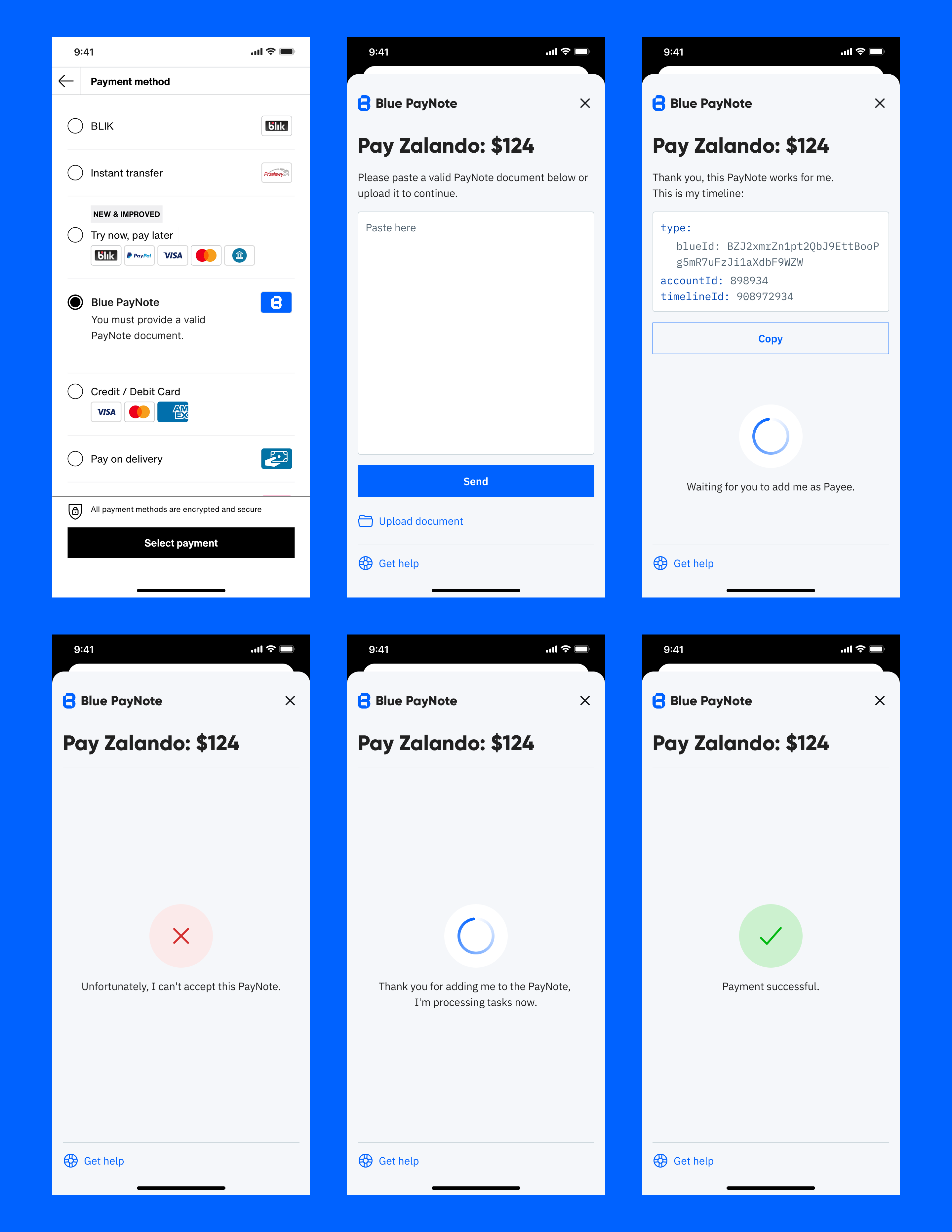

- **AI-Ready Payment Authorization with Blue PayNotes** - Blue enables AI agents to make bounded purchases without access to actual payment credentials by creating cryptographically signed "envelopes" with specific spending rules, solving the critical trust problem in AI commerce. [Blue PayNote](./payments/blue-paynote)

- **Programmable Trust with Multi-Party Verification** - Blue documents can embed executable rules that involve multiple trusted parties (banks, delivery services, inspectors) for verification, creating transparent processes where trust flows through the document itself rather than requiring participants to trust each other. [Smart Card Payment Examples](./payments/single-payment#example-guaranteed-delivery-discount-promise)

### Do you believe Blue will revolutionize human-AI interactions? Be critical - explain in maximum 2 sentences what excites you most and what concerns you most.

**What excites me most:** Blue's deterministic processing model and universal semantic language could genuinely solve the trust and interoperability problems that plague AI-human interactions today - the idea that AI agents can safely negotiate and execute complex agreements at machine speed while maintaining verifiable accountability through timelines is a breakthrough that could unlock entirely new forms of digital cooperation.

**What concerns me most:** The system's complexity and reliance on network effects for value creation present a classic bootstrapping challenge that could prevent adoption, and the potential for AI agents to execute sophisticated agreements at unprecedented speed, while powerful, introduces systemic risks that our current governance and oversight mechanisms aren't designed to handle.

## Business Impact & Vision

### If Blue becomes widely adopted, how might it change the relationship between banks, payment processors, and their customers? - explain in max 1000 chars

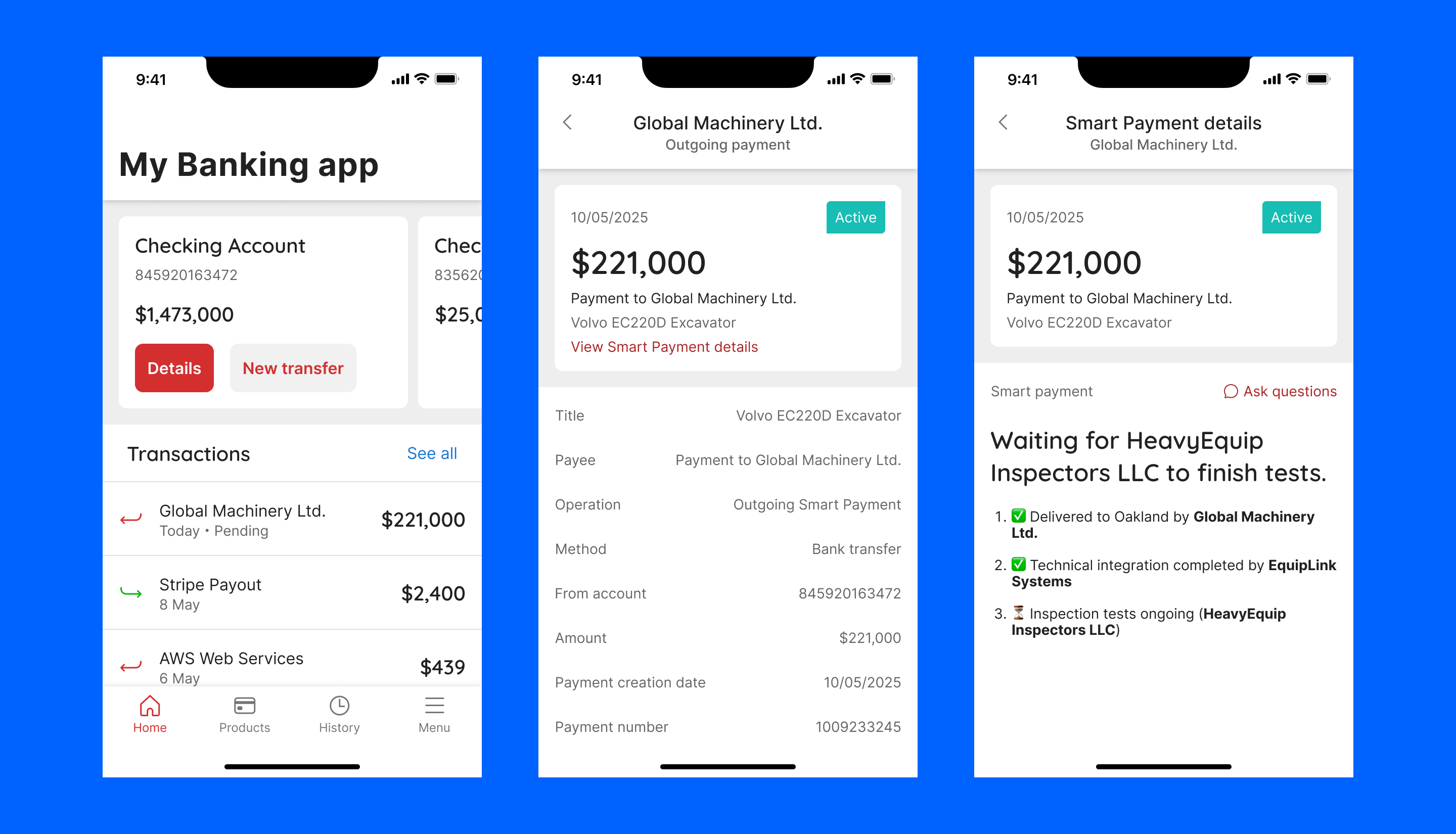

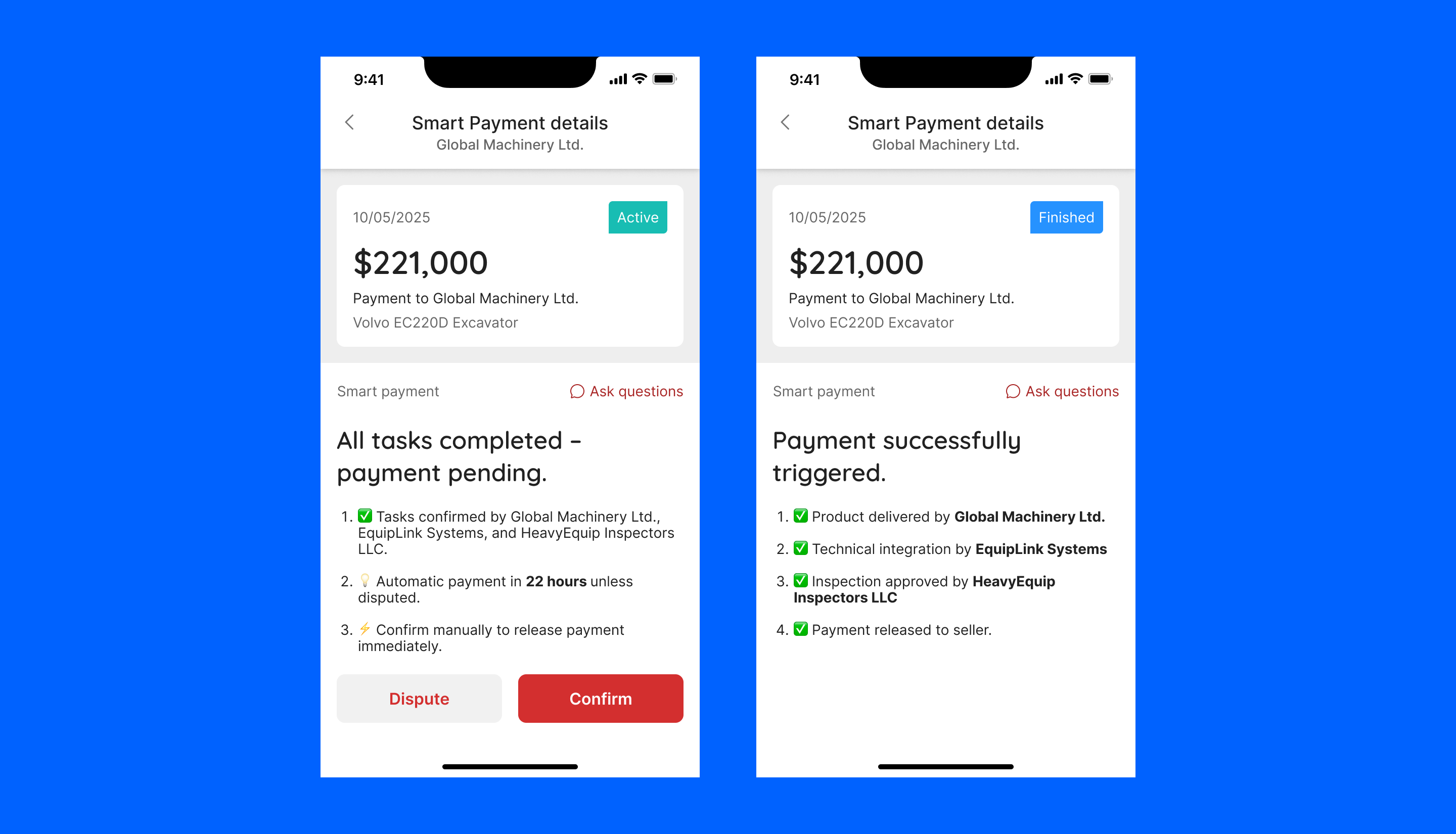

Banks & payment processors would evolve from **simple money movers** to **trust arbiters** - enforcing complex conditional agreements rather than just processing transactions. Their apps would transform into business intelligence platforms showing verification progress & relationship status, not just balances.

This creates new premium revenue streams: verification fees, conditional payment enforcement, automated escrow services. Instead of commoditized processing, they become integral to business operations.

Customers gain unprecedented guarantees through third-party verification (like "auto-refund if delivery takes >4 days, verified by DHL"). Merchants reduce chargebacks through executable terms rather than disputed promises.

The relationship shifts from "we move your money" to "we enforce your agreements" - making financial institutions central to business trust rather than just payment infrastructure.

### What specific problems does Blue solve that current blockchain/smart contract platforms cannot? - explain in max 1000 chars

Blue solves blockchain's core limitations:

**Privacy**: Personal timelines vs public ledgers - you control who sees what, like email

**Efficiency**: No mining/gas fees/global consensus - only document participants need to agree

**Flexible Trust**: Each document picks its own trust sources (Stripe, banks, DHL) vs forced blockchain consensus

**Real-world Integration**: Native external party inclusion vs complex oracle problems

**Composability**: Documents embed/reference others vs isolated smart contracts

**Accessibility**: AI converts natural language to Blue vs requiring specialized programming

**Multiple Realities**: Different valid perspectives vs enforced global state

**Selective Processing**: Only process relevant timelines vs entire network history

Blue gives you blockchain's verification guarantees without the baggage - like having email's privacy model but with executable, verifiable rules that any processor can independently confirm.

### How could Blue enable new business models that are impossible with today's technology stack? - explain in max 1000 chars

Blue enables **instant micro-partnerships** - strangers forming custom business relationships in minutes instead of months. Xavier can propose affiliate terms to Alice through AI, creating agreements that auto-execute without lawyers or complex integrations.

**AI-hive economy** emerges where thousands of agents negotiate, purchase services, and form partnerships autonomously using PayNotes - bounded payment authorization that lets AI spend money safely within precise rules.

**Programmable guarantees** transform customer trust - merchants can offer "80% discount if delivery is late" where payment processors automatically enforce promises, not just merchant goodwill.

**Distributed trust markets** replace central authorities - instead of trusting one party, create documents where banks verify payments, couriers verify delivery, inspectors verify quality - each enforcing their slice independently.

**Real-time revenue sharing** responds to live events without reconciliation - marketing spend automatically splits based on actual conversions, creative work pays based on usage metrics.

This creates a **collaboration economy** where forming trusted relationships becomes as easy as sending an email, enabling business models impossible when trust requires custom development.

## Technical Architecture

### How does Blue achieve deterministic processing across distributed systems without requiring blockchain consensus? - explain in max 500 chars

Blue achieves deterministic processing through:

1. **Content-addressable documents**: Identical BlueIds guarantee identical meaning across processors

2. **Deterministic contracts**: Same event inputs always produce identical document updates

3. **Timeline coordination**: Vector clocks & other mechanisms ensure all processors see events in identical order

4. **Independent verification**: Each processor reaches same conclusions without inter-processor communication

The key insight: consensus on event sequence + deterministic rules = identical outcomes without blockchain overhead.
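The "same ordered events + deterministic rules = same outcome" idea can be illustrated with a small Python sketch. The `Deposit`/`Withdraw` events and the `apply` rule are hypothetical stand-ins for document contracts; the point is that two independent replays of the same ordered events produce the same state fingerprint, while a different order can produce a different one.

```python
import hashlib
import json


def apply(state: dict, event: dict) -> dict:
    """Hypothetical deterministic rule: no clocks, randomness, or I/O."""
    new_state = dict(state)
    balance = state.get("balance", 0)
    if event["type"] == "Deposit":
        new_state["balance"] = balance + event["amount"]
    elif event["type"] == "Withdraw" and balance >= event["amount"]:
        new_state["balance"] = balance - event["amount"]
    return new_state


def run_processor(events: list[dict]) -> str:
    """Replay events in order and fingerprint the final state."""
    state: dict = {}
    for event in events:
        state = apply(state, event)
    canonical = json.dumps(state, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


ordered = [{"type": "Deposit", "amount": 100}, {"type": "Withdraw", "amount": 50}]

# Two processors replaying the same sequence agree without talking to each other
assert run_processor(ordered) == run_processor(list(ordered))

# A different order yields a different state (balance 100 instead of 50)
assert run_processor(ordered) != run_processor(list(reversed(ordered)))
```

This is why agreeing on event order matters: once the sequence is fixed, determinism does the rest.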

### Explain how Blue's timeline system differs from traditional event sourcing or blockchain approaches. - explain in max 500 chars

Blue timelines are **personal** (like email) vs **global** (like blockchain). Each participant controls their own timeline and shares selectively, avoiding expensive consensus. Unlike event sourcing's single stream per aggregate, Blue enables multi-party verification through independent timelines that coordinate when needed, maintaining privacy while ensuring all processors reach identical conclusions.

### How does Blue handle the 'double-spending' problem for PayNotes without requiring global consensus? - explain in max 500 chars

Blue PayNotes are authorization documents, not currency itself. They prevent double-spending through:

1. **Single-use design**: Once status changes to "Paid", the PayNote becomes unusable

2. **Timeline verification**: All state changes are cryptographically recorded on timelines

3. **Payment method backing**: Actual payments use existing systems (cards/banks/crypto) with their own protections

4. **Document state tracking**: All processors can independently verify current PayNote status

No global consensus needed - just verifiable authorization lifecycle.
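The single-use lifecycle can be sketched as a tiny state machine. This is a hypothetical Python model, not the PayNote schema: the `Pending` starting status, amounts in cents, and the category check are assumptions; only the idea that a `Paid` PayNote cannot be used again comes from the answer above.

```python
from dataclasses import dataclass


@dataclass
class PayNote:
    """Hypothetical PayNote: a bounded, single-use payment authorization."""
    max_amount_cents: int
    category: str
    status: str = "Pending"  # assumed initial status

    def capture(self, amount_cents: int, category: str) -> bool:
        """Authorize one charge within the rules; refuse everything else."""
        if self.status == "Paid":
            return False  # single-use: already spent
        if category != self.category or amount_cents > self.max_amount_cents:
            return False  # outside the bounds the payer set
        self.status = "Paid"
        return True


note = PayNote(max_amount_cents=50_000, category="vegetables")  # "max $500 on vegetables"
print(note.capture(42_000, "vegetables"))  # True: first use, within bounds
print(note.capture(1_000, "vegetables"))   # False: already Paid
```

Since every processor replays the same timeline entries against the same rules, each one sees the status become `Paid` at the same point and refuses a second capture.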

## Integration & Adoption

### What would it take for Blue to become the universal protocol for AI-to-AI commerce? - explain in max 500 chars

For Blue to become the universal AI-to-AI commerce protocol, it needs:

1. **Critical mass adoption** - Major AI platforms/agents using Blue

2. **Standard commerce document types** - Universal payment, contract, offer formats

3. **Trusted infrastructure** - Reliable timeline providers & processors AIs can depend on

4. **Easy integration** - Simple APIs for existing systems to add Blue support

5. **Regulatory clarity** - Legal frameworks for automated AI transactions

The technical foundation exists; it's about ecosystem adoption and standardization.

### How does Blue solve the trust problem between AI agents from different organizations? - explain in max 500 chars

Blue redirects trust from agents to documents. Instead of AI agents trusting each other, they trust verifiable document rules + signed timelines. All actions are cryptographically proven, any party can independently verify compliance, and trusted third parties can verify specific aspects. Bilateral trust becomes multilateral verification.

### Compare Blue with other agent frameworks available, choose categories and rate 0-10.

| Category | Blue | LangChain | CrewAI | Autogen | OpenAI API | AutoGPT |
|----------|------|-----------|---------|---------|------------|---------|
| **Financial Integration** | 9 | 2 | 1 | 1 | 1 | 1 |
| **Multi-party Coordination** | 9 | 3 | 7 | 8 | 2 | 2 |
| **Deterministic Execution** | 10 | 4 | 3 | 3 | 3 | 2 |
| **Universal Language** | 9 | 3 | 2 | 2 | 2 | 1 |
| **Trust & Verification** | 9 | 2 | 2 | 3 | 2 | 1 |
| **AI Payment Authorization** | 10 | 1 | 1 | 1 | 1 | 1 |
| **Cross-system Interoperability** | 8 | 6 | 4 | 5 | 4 | 3 |
| **Event-driven Architecture** | 9 | 5 | 4 | 4 | 3 | 3 |
| **Developer Adoption** | 3 | 9 | 6 | 6 | 8 | 7 |
| **Production Readiness** | 4 | 8 | 5 | 6 | 7 | 3 |

**Key Insights:**

- **Blue** dominates financial/trust categories but lacks adoption

- **LangChain** wins on adoption/production but weak on coordination

- **Autogen/CrewAI** excel at multi-agent coordination

- **OpenAI API** benefits from platform integration

- **AutoGPT** pioneered autonomous agents but lacks enterprise features

Blue's unique value proposition is AI-safe financial transactions and verifiable multi-party coordination—capabilities no other framework addresses.

### How does Blue relate to MCP (Model Context Protocol) and A2A (Agent-to-Agent) frameworks?

| Aspect | Blue | MCP (Model Context Protocol) | A2A Protocols |
|--------|------|------------------------------|---------------|
| **Primary Focus** | Verifiable business agreements | AI-tool connectivity | Agent communication |
| **Layer** | Application/Business Logic | Interface/Transport | Communication/Protocol |
| **Trust Model** | Cryptographic verification | Tool provider trust | Varies by implementation |
| **Determinism** | Guaranteed identical outcomes | Non-deterministic (AI responses) | Usually non-deterministic |
| **Financial Capability** | Native payment documents | None | Limited/external |
| **Multi-party Coordination** | Built-in with timelines | Single AI ↔ tools | Peer-to-peer |
| **State Management** | Document-centric with checkpoints | Stateless | Varies |
| **Interoperability** | Universal document language | Tool-specific schemas | Protocol-specific |

**MCP + Blue:**

- MCP could serve as the interface layer for AIs to read/write Blue documents

- AI agents use MCP to access Blue document processors

- Blue documents define the rules; MCP enables AI interaction with those rules

- Example: AI uses MCP to submit a PayNote to a Blue-enabled payment processor

**A2A + Blue:**

- Blue provides the universal language A2A protocols lack

- Blue documents become the "contract layer" for agent interactions

- Blue's deterministic processing ensures A2A agreements execute predictably

- Blue timelines provide verifiable audit trails for A2A communications

**Key Insight:**

Blue operates at a higher abstraction level - it's the "legal system" for AI interactions, while MCP/A2A are the "communication infrastructure." Blue documents could define what agents are allowed to do, while MCP/A2A handle how they communicate.

### How does Blue's approach to multi-party agreements compare to traditional legal contracts and escrow services? - explain in max 500 chars

Blue transforms static legal text into executable code. Unlike traditional contracts requiring courts for enforcement, Blue documents automatically execute when conditions are met. Unlike escrow services with single trusted parties, Blue distributes trust across multiple verifiers. This prevents disputes rather than resolving them, reduces costs, and enables real-time execution of complex multi-party agreements.

## Future Scenarios

### If executed properly, what role could Blue play in the forthcoming AI revolution, and how important might that role be? - explain in max 1000 chars

Blue could become the foundational protocol for safe AI autonomy - like HTTP for the web, but for AI coordination. It solves the critical "trust problem" that prevents AI agents from acting independently: how do you give an AI spending power without losing control?

Blue's PayNotes create cryptographically bounded authorization ("spend max $500 on vegetables"), while verifiable timelines ensure every AI action is accountable. Multiple AIs can coordinate on complex tasks through shared documents with transparent rules.

Most importantly, Blue enables a new AI economy where agents can safely trade services, negotiate partnerships, and handle transactions - all within human-defined boundaries. Instead of proprietary AI silos, we get interoperable agents speaking a universal language.

The role could be transformational: Blue might enable the shift from "AI as tool" to "AI as autonomous economic participant" while maintaining human control through verifiable, programmable constraints. This could unlock the full potential of AI collaboration while solving the safety and trust challenges that currently limit AI deployment.

If widely adopted, Blue could be the infrastructure layer that makes the AI economy possible.

### In a world where thousands of AI agents are making micro-transactions, how does Blue prevent chaos while maintaining speed? - explain in max 1000 chars

Blue prevents AI chaos through **bounded authorization** and **verifiable timelines**:

**Speed**: No global consensus required - each document processes independently. Thousands of agents can operate simultaneously without bottlenecks.

**Control**: AI agents get **Blue PayNotes** (bounded payment auth) instead of raw credentials. Like giving a child $20 for "vegetables only" - precise spending limits with automatic enforcement.

**Accountability**: Every action must land on a **signed timeline**. No invisible behavior - all agent actions are cryptographically verifiable.

**Circuit Breakers**: **Guardian documents** act as emergency stops. Humans set the boundaries; AIs operate at silicon speed within them.

**Deterministic Rules**: Document contracts ensure predictable outcomes. Multiple processors always reach identical conclusions without coordination overhead.

Result: **AI hive economy** with human-defined guardrails - thousands of agents transacting safely at machine speed while humans maintain ultimate control through document rules rather than real-time oversight.

### What role could Blue play in enabling autonomous economic agents (AI companies) to operate independently? - explain in max 1000 chars

Blue enables AI agents to operate autonomously by providing:

**Bounded Payment Authority**: AI agents can make purchases using Blue PayNotes - cryptographically secured "payment envelopes" with specific spending rules, eliminating need for raw payment credentials.

**Trustless Verification**: Documents contain executable rules that all parties independently verify, so AI agents don't need to trust each other - they trust the shared verification process.

**Automatic Agreements**: AI can negotiate partnerships, revenue shares, and service contracts through Blue documents that execute automatically when conditions are met.

**Cryptographic Accountability**: Every AI action gets recorded on verifiable timelines, creating tamper-proof audit trails without requiring central oversight.

**Multi-Agent Coordination**: Channel-based event systems let thousands of AI agents collaborate at machine speed while maintaining deterministic outcomes.

This creates an "AI economy" where agents can safely transact, form partnerships, and provide services to each other with minimal human intervention - trust flows through verification, not reputation.

====================

DOCUMENT: Introduction to Blue Language

CATEGORY: language

URL: https://language.blue/docs/language/introduction

====================

# Introduction to Blue Language

## The Problem of Reinvention

How many times have you defined a `Person` class in your career?

```java
// Java
public class Person {
    private String name;
    private String surname;
    private int age;
    // And so on...
}
```

```typescript
// TypeScript
interface Person {
  name: string;
  surname: string;
  age: number;
  // And so on...
}
```

```python
# Python
class Person:
    def __init__(self, name, surname, age):
        self.name = name
        self.surname = surname
        self.age = age

    # And so on...
```

Every API, every service, every application reinvents these basic types. We have schemas, DTOs, POJOs, models, and interfaces—all representing the same concepts in slightly different ways.

Solutions like Schema.org tried to standardize these definitions, but they weren't flexible enough or easy enough to integrate with existing systems. The problem persists: we lack a universal language for describing digital objects.

## A Universal Language for Digital Objects

We believe that clear communication requires a common language—not just for simple API requests and responses, but for complex digital relationships, contracts, and behaviors. That's why Blue is the **B**asic **L**anguage that **U**nifies **E**xperience.

Blue lets you define an object once and use it everywhere, across systems, programming languages, and organizations.

## The Core Idea: Content-Addressable Types

The key innovation in Blue is that every document has a unique content hash called a **blueId**. This is essentially a single word that uniquely represents specific content.

You can try it yourself at [web.blue/blue-id-calculator](https://web.blue/blue-id-calculator)
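To make "content hash" concrete: the blueIds in this section are 44-character strings that appear to be Base58-encoded 32-byte digests. The Python sketch below assumes SHA-256 over key-sorted JSON plus Base58; it illustrates content addressing only and does not reproduce Blue's canonicalization, so its output will not match the blueIds shown here.

```python
import hashlib
import json

# Bitcoin-style Base58 alphabet: no 0, O, I or l, so ids are easy to read and copy
B58_ALPHABET = "123456789ABCDEFGHJKLMNPQRSTUVWXYZabcdefghijkmnopqrstuvwxyz"


def base58(data: bytes) -> str:
    """Encode bytes as Base58, keeping leading zero bytes as '1'."""
    n = int.from_bytes(data, "big")
    encoded = ""
    while n > 0:
        n, rem = divmod(n, 58)
        encoded = B58_ALPHABET[rem] + encoded
    leading_zeros = len(data) - len(data.lstrip(b"\0"))
    return "1" * leading_zeros + encoded


def content_id(doc: dict) -> str:
    """Hash a canonical JSON form so that key order does not change the id."""
    canonical = json.dumps(doc, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return base58(hashlib.sha256(canonical.encode("utf-8")).digest())


a = {"name": "Simple Amount", "amount": {"type": "Double"}, "currency": {"type": "Text"}}
b = {"currency": {"type": "Text"}, "amount": {"type": "Double"}, "name": "Simple Amount"}
c = {"name": "Simple Amount", "amount": {"type": "Integer"}, "currency": {"type": "Text"}}

print(content_id(a) == content_id(b))  # True: same content, different key order
print(content_id(a) == content_id(c))  # False: different content, different id
```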

Let's start with a simple type definition:

```yaml
name: Simple Amount
amount:
  type: Double
currency:
  type: Text
```

This has the blueId `FgHZjSNb8X9zty82ps4eRkVVuhpPG6ajSGvG6HKKrhUq` - a unique fingerprint of its content.

Now we can define another type that uses this one:

```yaml
name: Person
age:
  type: Integer
spent:
  type:
    blueId: FgHZjSNb8X9zty82ps4eRkVVuhpPG6ajSGvG6HKKrhUq # Simple Amount
```

This `Person` type has blueId `GRwTYsr32pieyWQH1PAFrQAWBUCbKivH4K9yYC8hFHaU`.

## Creating Instances with Types

Now we can create an actual person:

```yaml
name: Alice
type:
  blueId: GRwTYsr32pieyWQH1PAFrQAWBUCbKivH4K9yYC8hFHaU # Person
age: 25
spent:
  amount: 27.15
  currency: USD
```

This instance has blueId `3JTd8soWugBAfxBAYKuScmmvbdgFw9SVp7fPdJgrAUVn`.

## The Magic of Meaning-Based Identity

Here's where it gets interesting. You'll get the _exact same blueId_ if you expand all the type references:

```yaml
name: Alice
type:
  name: Person
  age:
    type: Integer
  spent:
    type:
      name: Simple Amount
      amount:
        type: Double
      currency:
        type: Text
age: 25
spent:
  amount: 27.15
  currency: USD
```

This produces the same blueId: `3JTd8soWugBAfxBAYKuScmmvbdgFw9SVp7fPdJgrAUVn`

This is a core principle of Blue: **documents with the same meaning have the same blueId, regardless of how they're represented.**
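One way to get this property is to hash documents recursively, so that an inlined sub-document and a `blueId` reference to it reduce to the same thing. The Python sketch below is a hypothetical scheme (hex SHA-256, no list handling), not Blue's actual algorithm; it only demonstrates why expanding references need not change the id.

```python
import hashlib
import json


def node_id(node: dict) -> str:
    """Hypothetical recursive id: each nested object is replaced by a reference to it."""
    if set(node) == {"blueId"}:
        return node["blueId"]  # a reference stands for its target's id
    reduced = {
        key: {"blueId": node_id(value)} if isinstance(value, dict) else value
        for key, value in node.items()
    }
    canonical = json.dumps(reduced, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


simple_amount = {"name": "Simple Amount", "amount": {"type": "Double"}, "currency": {"type": "Text"}}
person = {"name": "Person", "age": {"type": "Integer"},
          "spent": {"type": {"blueId": node_id(simple_amount)}}}
spent = {"amount": 27.15, "currency": "USD"}

# Alice with her type given by reference...
alice_ref = {"name": "Alice", "type": {"blueId": node_id(person)}, "age": 25, "spent": spent}
# ...and with every type reference expanded inline
alice_inline = {
    "name": "Alice",
    "type": {"name": "Person", "age": {"type": "Integer"}, "spent": {"type": simple_amount}},
    "age": 25,
    "spent": spent,
}

print(node_id(alice_ref) == node_id(alice_inline))  # True
```

A production scheme also has to canonicalize lists, numbers, and type metadata; those details are out of scope for this sketch.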

## Simplifying with Names

For convenience, you can use type names directly with the `blue` directive:

```yaml
blue: https://language.blue/simple.blue
name: Alice
type: Person
age: 25
spent:
  amount: 27.15
  currency: USD
```

This tells processors where to find the type definitions. Throughout this documentation, we'll use this convention to keep examples clean and focused.

## Extension and Type Safety

In Blue, every document can be a type for another document:

```yaml
name: Alice Extended
type: Alice
smiling: true
friends:
  type: List
  itemType: Person
```

Every `Alice Extended` must be an `Alice`. If fields like `age` or `spent` were already set in `Alice`, they cannot be overwritten—you can only add new attributes or complete those that weren't specified earlier.

This creates a strong type system without requiring a centralized registry of types.
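The "complete, never overwrite" rule can be checked mechanically. A minimal Python sketch, assuming documents are plain nested dicts and treating top-level `name` and `type` as metadata that a subtype may set; `extension_conflicts` is a hypothetical helper, not part of a Blue library.

```python
METADATA_KEYS = {"name", "type"}  # assumption: a subtype may set its own name and type


def extension_conflicts(parent: dict, child: dict, path: str = "") -> list[str]:
    """Return the paths where `child` changes a value that `parent` already fixed."""
    conflicts = []
    for key, parent_value in parent.items():
        if (not path and key in METADATA_KEYS) or key not in child:
            continue  # top-level metadata, or inherited unchanged
        where = f"{path}.{key}" if path else key
        child_value = child[key]
        if isinstance(parent_value, dict) and isinstance(child_value, dict):
            conflicts += extension_conflicts(parent_value, child_value, where)
        elif parent_value != child_value:
            conflicts.append(where)
    return conflicts


alice = {"name": "Alice", "age": 25, "spent": {"amount": 27.15, "currency": "USD"}}

ok = {"name": "Alice Extended", "type": "Alice", "smiling": True}
bad = {"name": "Alice Extended", "age": 30, "spent": {"amount": 27.15, "currency": "EUR"}}

print(extension_conflicts(alice, ok))   # []
print(extension_conflicts(alice, bad))  # ['age', 'spent.currency']
```

Only keys the parent already set are compared, so a child remains free to add new fields or fill in ones the parent left open.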

## Language Integration

Blue isn't just a document format—it integrates with your programming language of choice. For Java:

```java
import lombok.AllArgsConstructor;
// Blue and @TypeBlueId come from the blue-language-java library (imports omitted)

@TypeBlueId("FgHZjSNb8X9zty82ps4eRkVVuhpPG6ajSGvG6HKKrhUq")
@AllArgsConstructor
public class SimpleAmount {
    Double amount;
    String currency;
}

@TypeBlueId("GRwTYsr32pieyWQH1PAFrQAWBUCbKivH4K9yYC8hFHaU")
@AllArgsConstructor
public class Person {
    String name;
    Integer age;
    SimpleAmount spent;
}

// Creating and using a Blue document (inside a method)
Person alice = new Person("Alice", 25, new SimpleAmount(27.15, "USD"));
Blue blue = new Blue();

// Calculate the blueId
String blueId = blue.calculateBlueId(alice);
assert blueId.equals("3JTd8soWugBAfxBAYKuScmmvbdgFw9SVp7fPdJgrAUVn");

// Convert to YAML
String yaml = blue.objectToYaml(alice);
```

The calculated blueId will match the one from our YAML examples, and the generated YAML will be semantically equivalent.

## Reusing Existing Types

Rather than defining all types yourself, you can import libraries from [repo.blue](https://repo.blue) and other sources. This gives you immediate access to standard types like `Person`, `Address`, `Payment`, and more specialized industry-specific types.

For popular types, language-specific libraries are available:

```bash
# JavaScript/TypeScript
npm install @blue-repository/identity-types

# Java (Maven): groupId blue.repository, artifactId identity-types, version 1.0.0

# Python
pip install blue-repository-identity-types
```

This eliminates the need to reinvent common structures and ensures consistent definitions across systems and organizations.

====================

DOCUMENT: Type System and Extension

CATEGORY: language

URL: https://language.blue/docs/language/types

====================

# Type System and Extension

In the previous section, we introduced the basic concept of types in Blue. Now, let's explore the type system more deeply, seeing how it enables powerful inheritance and extension models while maintaining strict compatibility.

:::note

For clarity, most examples in this documentation omit the `blue:` directive that would typically be required in practice. Learn more about this directive in the [Blue Directive](./blue-directive) section.

:::

## Building Type Hierarchies

Blue's type system allows you to create sophisticated inheritance hierarchies where more specialized types extend general ones:

```yaml
name: Product
price:
  amount:
    type: Number
    contracts:
      amountValidator:
        type: Schema Validator
        minimum: 0
  currency:
    type: ISO-4217 Currency Code
sku:
  type: Text
```

The `contracts` section introduces an important Blue concept: contracts define special behaviors for documents or fields. Here, the Schema Validator contract enforces constraints on the `price.amount` field. You'll learn more about contracts in the [Contracts section](../contracts/introduction).

Note that all fields in Blue are optional by default. If you want to make a field required, you need to add that constraint explicitly:

```yaml
name: Electronics
type: Product
manufacturer:
  type: Text
  contracts:
    requiredFieldValidator:
      type: Schema Validator
      required: true # This field must be present
```
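The Schema Validator fields used in this section (`minimum`, `maximum`, `required`, `options`) can be pictured as a single check function. The Java record below is a hypothetical model that only mirrors those YAML fields; it is not the API of any Blue processor, and a `null` component simply means "no constraint of this kind".

```java
import java.util.List;

// Hypothetical model of the Schema Validator fields shown in these examples.
record SchemaValidator(Double minimum, Double maximum, Boolean required, List<String> options) {

    // Returns true when `value` satisfies every constraint that is set.
    // `value == null` stands for a field that is absent from the document.
    boolean validate(Object value) {
        if (value == null) {
            return !Boolean.TRUE.equals(required);   // optional unless required: true
        }
        if (value instanceof Number n) {
            double d = n.doubleValue();
            if (minimum != null && d < minimum) return false;
            if (maximum != null && d > maximum) return false;
        }
        if (options != null && !options.contains(value.toString())) {
            return false;
        }
        return true;
    }
}
```

For example, `new SchemaValidator(null, null, true, null)` models `requiredFieldValidator`: it rejects a missing `manufacturer` and accepts any present value.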

## Specialization with Constraints

Let's create more specialized product types by adding both fields and constraints:

```yaml
name: Smartphone
type: Electronics
screenSize:
  type: Number
  contracts:
    screenSizeValidator:
      type: Schema Validator
      minimum: 3.5
      maximum: 7.5
operatingSystem:
  type: Text
  contracts:
    osOptionsValidator:
      type: Schema Validator
      options: ['iOS', 'Android']
```

You can also define field types separately for reuse:

```yaml
name: Operating System
type: Text
contracts:
  osOptionsValidator:
    type: Schema Validator
    options: ['iOS', 'Android']
---
name: Smartphone
type: Electronics
screenSize:
  type: Number
  contracts:
    screenSizeValidator:
      type: Schema Validator
      minimum: 3.5
      maximum: 7.5
operatingSystem:
  type: Operating System
```

Both approaches achieve the same result, giving you flexibility in how you organize your types.

Let's continue building our hierarchy:

```yaml
name: iPhone
type: Smartphone
operatingSystem: iOS # Fixed value for all iPhones
model:
  type: Text
  contracts:
    modelValidator:
      type: Schema Validator
      required: true
```

## Inheriting and Adding Contracts

When you extend a type, you inherit all its contracts. To add additional constraints to fields, use new contracts with descriptive names:

```yaml
name: Premium Smartphone
type: Smartphone
price:
  amount:
    contracts:
      premiumPriceValidator:
        type: Schema Validator
        minimum: 599.99 # Additional constraint on price amount
```

When resolved, the `price.amount` field carries both the `amountValidator` from `Product` (requiring ≥ 0) and the `premiumPriceValidator` from `Premium Smartphone` (requiring ≥ 599.99). Together, they require the amount, if present, to be at least 599.99.

This ability to progressively add constraints is powerful for modeling domain-specific rules that build upon more general ones.
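One way to picture the combined effect: every validator attached to a field must pass, so the effective rule is the conjunction of all inherited and added validators. This Java sketch models each contract as a plain `DoublePredicate`; `ValidatorChain` is a hypothetical name and nothing here is Blue library API.

```java
import java.util.List;
import java.util.function.DoublePredicate;

// Hypothetical helper (not Blue library API): each contract on a field is one predicate.
final class ValidatorChain {

    // A value is accepted only if every validator accepts it.
    static boolean acceptsAll(List<DoublePredicate> validators, double value) {
        return validators.stream().allMatch(v -> v.test(value));
    }

    public static void main(String[] args) {
        DoublePredicate amountValidator = v -> v >= 0;             // inherited from Product
        DoublePredicate premiumPriceValidator = v -> v >= 599.99;  // added by Premium Smartphone
        List<DoublePredicate> validators = List.of(amountValidator, premiumPriceValidator);

        System.out.println(acceptsAll(validators, 799.99)); // true
        System.out.println(acceptsAll(validators, 299.99)); // false: fails premiumPriceValidator
    }
}
```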

## Multi-Level Inheritance

Blue supports multi-level inheritance, allowing you to create deeply specialized types:

```yaml
name: iPhone 14
type: iPhone
model: '14' # Fixed value for all iPhone 14s
storage:
  type: Text
  contracts:
    storageOptionsValidator:
      type: Schema Validator
      options: ['128GB', '256GB', '512GB', '1TB']
```

When we create an instance:

```yaml
name: iPhone 14 128GB
type: iPhone 14
storage: '128GB'
price:
  amount: 799.99
  currency: USD
color: Blue
```

The document inherits all properties from the entire inheritance chain, including the fixed values `operatingSystem: iOS` from the `iPhone` type and `model: "14"` from the `iPhone 14` type.

## Type Enforcement Rules

The Blue type system enforces strict rules during inheritance:

1. **No Overriding Values**: If a parent type defines a field value, child types inherit exactly that value and cannot change it

2. **Type Compatibility**: If a parent type defines a field's type, child types must use the same or a compatible subtype

3. **Additive Properties Only**: Child types can add new properties but cannot remove inherited ones

For example, these would be invalid:

```yaml
# INVALID - trying to change inherited fixed value
name: InvalidiPhone
type: iPhone
operatingSystem: Android # Cannot override 'iOS' value from iPhone type
```

```yaml
# INVALID - trying to change the type of 'price.amount'
name: InvalidProduct
type: Product
price:
  amount:
    type: Text # Cannot override Number type from Product
```

These strict rules ensure that a fundamental principle holds true: **Every instance of a subtype is also a valid instance of its parent type**. This means every "iPhone 14" is an "iPhone", every "iPhone" is a "Smartphone", and so on up the type hierarchy.

This principle—known as the Liskov Substitution Principle in object-oriented design—is strictly enforced in Blue's type system.
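Rule 2 can be checked by walking up a type's parent chain. The sketch below keeps a hypothetical registry mapping each type name to its parent and accepts a redeclared field type only when it equals the inherited type or descends from it. It is a simplified illustration using plain names; a real processor would compare resolved types, and `TypeHierarchy` is not a Blue library class.

```java
import java.util.Map;

// Hypothetical type registry: type name -> parent type name (absent for a root type).
// Assumes the hierarchy has no cycles.
final class TypeHierarchy {
    private final Map<String, String> parentOf;

    TypeHierarchy(Map<String, String> parentOf) {
        this.parentOf = parentOf;
    }

    // True if `candidate` is `ancestor` itself or inherits from it, directly or indirectly.
    boolean isSubtypeOf(String candidate, String ancestor) {
        for (String t = candidate; t != null; t = parentOf.get(t)) {
            if (t.equals(ancestor)) {
                return true;
            }
        }
        return false;
    }

    // Rule 2: an inherited field may only be redeclared with the same type or a subtype.
    void checkFieldOverride(String field, String inheritedType, String newType) {
        if (!isSubtypeOf(newType, inheritedType)) {
            throw new IllegalArgumentException("Field '" + field + "' must remain a "
                    + inheritedType + ", got " + newType);
        }
    }
}
```

Registering `iPhone 14 -> iPhone -> Smartphone -> Electronics -> Product` makes `isSubtypeOf("iPhone 14", "Product")` true, while redeclaring `price.amount` as `Text` instead of `Number` is rejected, as in the invalid example above.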

## Working with Collections

Blue supports two primary collection types—lists and dictionaries—that let you build complex data structures:

```yaml
# A product bundle with a list of items
name: Starter Tech Bundle
bundlePrice:
  amount: 1299.99
  currency: USD
items:
  type: List
  itemType: Product
  items:
    - type: Smartphone
      name: iPhone 14
      model: "14"
      storage: "128GB"
      price:
        amount: 799.99
        currency: USD
    - type: Electronics
      name: Wireless Earbuds
      manufacturer: Apple
      price:
        amount: 249.99
        currency: USD
    - type: Electronics
      name: Wireless Charger
      manufacturer: Belkin
      price:
        amount: 49.99
        currency: USD
```

```yaml
# Inventory management with product counts
name: Store Inventory
stock:
  type: Dictionary
  keyType: Text
  valueType: Integer
  'iPhone 14 128GB Black': 23
  'iPhone 14 256GB Black': 15
  'iPhone 14 Pro 128GB Silver': 8
  'Samsung Galaxy S23': 12
```

These examples demonstrate how collections enable complex data modeling while maintaining Blue's strict typing:

- The `items` list can contain any `Product` subtype

- The `stock` dictionary maps product names to their inventory counts

## Programming Language Integration

Blue types map cleanly to object-oriented programming concepts:

```java
// Define classes mapped to Blue types
// (Lombok's @Data generates the getters used in the assertions below)
@Data
@TypeBlueId("Price-BlueId")
public class Price {
    private Double amount;
    private String currency;
}

@Data
@TypeBlueId("Product-BlueId")
public class Product {
    private String name;
    private String description;
    private Price price;
    private String sku;
}

// Specialization with enum for type-safe values
public enum OperatingSystem {
    IOS, ANDROID
}

@Data
@TypeBlueId("Smartphone-BlueId")
public class Smartphone extends Product {
    private Double screenSize;
    private OperatingSystem operatingSystem;
}

// Converting between YAML and Java objects
String phoneYaml = """
        name: iPhone 14
        type: Smartphone
        price:
          amount: 799.99
          currency: USD
        screenSize: 6.1
        operatingSystem: IOS
        """;

// Convert YAML to Java object
Blue blue = new Blue(nodeProvider);
Smartphone phone = blue.yamlToObject(phoneYaml, Smartphone.class);

// Verify the conversion
assert phone.getName().equals("iPhone 14");
assert phone.getPrice().getAmount() == 799.99;
assert phone.getPrice().getCurrency().equals("USD");
assert phone.getOperatingSystem() == OperatingSystem.IOS;
```

This bidirectional mapping ensures that Blue documents can be seamlessly converted to strongly-typed language objects and back again.

## Resolving Documents

When a Blue processor encounters a document with type references, it performs "resolution" to fully expand all types:

```yaml
# Before resolution
name: iPhone 14 Pro 256GB Black
type: iPhone 14
storage: "256GB"
color: Black
price:
  amount: 1099.99
  currency: USD
---
# After resolution (blueId: 9pq5Enj1LFqQskQShJwK9ERU8u2CLfnP2KbeaufYJyNm)
name: iPhone 14 Pro 256GB Black
type:
  name: iPhone 14
  type:
    name: iPhone
    type:
      name: Smartphone
      type:
        name: Electronics
        type:
          name: Product
          price:
            amount:
              type: Number
              contracts:
                amountValidator:
                  type: Schema Validator
                  minimum: 0
            currency:
              type: ISO-4217 Currency Code
          sku:
            type: Text
        manufacturer:
          type: Text
          contracts:
            requiredFieldValidator:
              type: Schema Validator
              required: true
      screenSize:
        type: Number
        contracts:
          screenSizeValidator:
            type: Schema Validator
            minimum: 3.5
            maximum: 7.5
    operatingSystem: iOS
  model: "14"
  storage:
    type: Text
    contracts:
      storageOptionsValidator:
        type: Schema Validator
        options: ["128GB", "256GB", "512GB", "1TB"]
storage: "256GB"
color: Black
price:
  amount: 1099.99
  currency: USD
```

Resolution creates a fully expanded document that contains all inherited properties and constraints. This ensures consistent validation and behavior across different processors.

You can perform resolution using the Blue library:

```java
// Parse the document
Blue blue = new Blue(nodeProvider);
Node node = blue.yamlToNode(yaml);

// Resolve types
Node resolved = blue.resolve(node);

// Convert back to YAML
String resolvedYaml = blue.nodeToYaml(resolved);
```

## Verifying Type Relationships

You can check if one document is a valid subtype of another:

```java
boolean isIPhone = blue.nodeMatchesType(myPhone, iPhone); // true
boolean isSmartphone = blue.nodeMatchesType(myPhone, smartphone); // true
boolean isProduct = blue.nodeMatchesType(myPhone, product); // true
```

This enables type-safe operations in your applications when working with Blue documents.

====================

DOCUMENT: BlueId: Universal Content Addressing

CATEGORY: language

URL: https://language.blue/docs/language/blue-id

====================

# BlueId: Universal Content Addressing

In previous sections, we explored Blue's type system and document inheritance. Now, let's focus on the heart of Blue's referencing system: the BlueId—a unique identifier based on content rather than location.

## Beyond Traditional Identifiers

Traditional identifiers tell you where to find something:

- URLs point to server locations

- Database IDs reference rows in tables

- UUIDs are assigned to specific objects

In contrast, a BlueId represents what something is—its actual content and meaning. This creates a fundamentally different way of referencing digital objects:

```yaml
# Traditional approach
{
  "id": "8f7d3c2e-1a5b-4f9c-8d7e-6b2c1a3f4e5d",
  "name": "iPhone 14",
  "price": 799.99
}
---
# Blue approach
name: iPhone 14
price: 799.99
# This document's BlueId is HxvqaVPopn9w8CuVAp4YpVbiaEG25MmZY1UVZ4nA1hxV
```

The BlueId is not stored in the document—it's derived from the content itself.

## The Web of Meaning

Every BlueId represents a "word" in a vast language of digital content:

- Each document node has its own BlueId

- The same content always has the same BlueId

- BlueIds can reference content across systems and organizations

- Multiple documents can incorporate the same content via BlueId

This creates a web of interconnected content where references are based on meaning, not location.

Traditional integration approaches require us to constantly reinvent and redefine our data models. It's like meeting new people and having to teach them the words for "apple," "orange," and "dog" every time. Blue offers a different approach—a shared semantic web where concepts are universally addressable.

Instead of trading isolated schemas and models, we're building a collective knowledge network. Any system can reference any node in this web without prior coordination. Your "Person" type can be immediately understood and used by others without custom mapping or translation.

This network is continuously enriched as more systems adopt Blue. Common patterns naturally emerge, and specialized domains can extend standard types without breaking compatibility. It's a living language that grows organically with use.

## BlueId Calculation

BlueId is a content hash calculated for every document using a bottom-up approach, starting from the deepest layer and propagating upwards. Technically, it is a base58-encoded SHA-256 hash of canonicalized JSON (following RFC 8785, the JSON Canonicalization Scheme) for each node in the document. This ensures:

- Uniqueness: Any change in the content results in a new BlueId

- Integrity: Documents reference each other unambiguously

- Interconnectedness: Documents can be linked, forming a web of interconnected content

The specific normalization procedures and implementation details are available in the [Specification: BlueId Calculation](../advanced/formal-specification.md#blueid-calculation) section.
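To illustrate only the final encoding step, the Java sketch below hashes a string with SHA-256 and base58-encodes the digest. The Bitcoin base58 alphabet is an assumption (it matches the characters seen in the BlueIds above), and the sketch deliberately skips RFC 8785 canonicalization and the bottom-up, per-node hashing, so its output is **not** a real BlueId. `Base58Sha256` is a hypothetical class name.

```java
import java.math.BigInteger;
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

// Hypothetical illustration of "base58(SHA-256(bytes))"; not the BlueId algorithm.
final class Base58Sha256 {
    // Assumed alphabet: Bitcoin-style base58 (no 0, O, I or l).
    private static final String ALPHABET =
            "123456789ABCDEFGHJKLMNPQRSTUVWXYZabcdefghijkmnopqrstuvwxyz";
    private static final BigInteger BASE = BigInteger.valueOf(58);

    // Leading zero bytes become '1'; the remaining bytes are written as a base-58 number.
    static String encode(byte[] input) {
        BigInteger value = new BigInteger(1, input);   // unsigned big-endian integer
        StringBuilder sb = new StringBuilder();
        while (value.signum() > 0) {
            BigInteger[] qr = value.divideAndRemainder(BASE);
            sb.append(ALPHABET.charAt(qr[1].intValue())); // least significant digit first
            value = qr[0];
        }
        for (int i = 0; i < input.length && input[i] == 0; i++) {
            sb.append(ALPHABET.charAt(0));
        }
        return sb.reverse().toString();
    }

    // SHA-256 of the UTF-8 bytes of `text`, base58-encoded.
    static String hash(String text) {
        try {
            byte[] digest = MessageDigest.getInstance("SHA-256")
                    .digest(text.getBytes(StandardCharsets.UTF_8));
            return encode(digest);
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("SHA-256 should be available on every JVM", e);
        }
    }
}
```

Changing even one character of the input yields a completely different string, which is the "Uniqueness" property listed above in miniature.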

## Walking the Blue Web

One of Blue's powerful capabilities is exploring this semantic web by retrieving referenced content as needed. Starting with any BlueId, you can "extend" outward to explore connected nodes.

For example, with our "Alice" document from earlier:

```yaml
# Starting point - just the BlueId
blueId: 3JTd8soWugBAfxBAYKuScmmvbdgFw9SVp7fPdJgrAUVn
```

We can extend one level to see the immediate properties:

```java
Node node = blue.blueIdToNode("3JTd8soWugBAfxBAYKuScmmvbdgFw9SVp7fPdJgrAUVn");
Node oneLevelExtended = blue.extend(node, PathLimits.withSinglePath("/*"));
```

Which produces:

```yaml
name: Alice
type:
  blueId: GRwTYsr32pieyWQH1PAFrQAWBUCbKivH4K9yYC8hFHaU # Person
age: 25
spent:
  blueId: HB8H2fLKAVZcEcqh19HiTehXjy25d22MMF6wAVvDzC4i # 27.15 USD
```

We can explore specific paths more deeply:

```java

Node spentExtended = blue.extend(node, PathLimits.withSinglePath("/spent/*"));

```

```yaml
name: Alice
type:
  blueId: GRwTYsr32pieyWQH1PAFrQAWBUCbKivH4K9yYC8hFHaU # Person
age: 25
spent:
  amount: 27.15
  currency: USD
```
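Conceptually, extending means replacing `{blueId: ...}` references with the content they point to, but only as far as you ask. The Java sketch below models a node provider as an in-memory map from BlueId to document and expands references up to a fixed depth. `InMemoryNodeProvider`, the short placeholder ids, and the depth-based limit are illustrative assumptions; the Blue library's `PathLimits` is path-based and more expressive.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical node provider (not Blue library API): BlueId -> document content.
final class InMemoryNodeProvider {
    private final Map<String, Map<String, Object>> store;

    InMemoryNodeProvider(Map<String, Map<String, Object>> store) {
        this.store = store;
    }

    // Replaces {blueId: ...} references with stored content, at most `depth` levels deep.
    // References beyond the limit, or unknown to this provider, are left untouched.
    @SuppressWarnings("unchecked")
    Map<String, Object> extend(Map<String, Object> node, int depth) {
        if (depth <= 0) {
            return node;
        }
        Map<String, Object> result = new LinkedHashMap<>();
        for (Map.Entry<String, Object> e : node.entrySet()) {
            Object value = e.getValue();
            if (value instanceof Map<?, ?> child) {
                Map<String, Object> childMap = (Map<String, Object>) child;
                Object ref = childMap.get("blueId");
                if (ref instanceof String id && childMap.size() == 1 && store.containsKey(id)) {
                    childMap = store.get(id);          // fetch the referenced content
                }
                value = extend(childMap, depth - 1);
            }
            result.put(e.getKey(), value);
        }
        return result;
    }
}
```

With depth 0 the `spent` reference stays a bare `blueId`; with depth 1 it is replaced by `amount` and `currency`, roughly matching the `/spent/*` example above.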

## Practical Example: E-Commerce System

Let's explore a more complex real-world example showing how BlueIds connect multiple documents in an e-commerce system:

```yaml
# Customer order with BlueId references
name: 'Order #12345'
date: 2023-06-15
customer:
  blueId: 3JTd8soWugBAfxBAYKuScmmvbdgFw9SVp7fPdJgrAUVn # Alice
items:
  - product:
      blueId: HxvqaVPopn9w8CuVAp4YpVbiaEG25MmZY1UVZ4nA1hxV # iPhone 14
    quantity: 1
  - product:
      blueId: 8xYi5Svou5DVawB7CDEGuZitUGFChRYcJUF67bQ3NfXt # Wireless Earbuds
    quantity: 2
catalog:
  blueId: kL9mN0pQrStUvWxYz1A2B3C4D5E6F7G8H4FGh7j8 # Full product catalog
```

In this example, the order references:

- A customer profile (Alice)

- Individual products being ordered

- The entire product catalog (potentially thousands of products)

The order document remains lightweight because it only contains BlueId references to these larger entities. If we need customer details, we can extend just that path:

```java

Node orderWithCustomer = blue.extend(order, PathLimits.withSinglePath("/customer/**"));

```

```yaml
name: 'Order #12345'
date: 2023-06-15
customer:
  name: Alice
  type:
    name: Person
    # Full Person type definition
  age: 25
  spent:
    amount: 27.15
    currency: USD
items:
  - product:
      blueId: HxvqaVPopn9w8CuVAp4YpVbiaEG25MmZY1UVZ4nA1hxV # iPhone 14
    quantity: 1
  - product:
      blueId: 8xYi5Svou5DVawB7CDEGuZitUGFChRYcJUF67bQ3NfXt # Wireless Earbuds
    quantity: 2
catalog:
  blueId: kL9mN0pQrStUvWxYz1A2B3C4D5E6F7G8H4FGh7j8 # Full product catalog
```

## Extension Risk Management

Extend carefully! Some BlueIds can represent enormous amounts of data:

- The `catalog` BlueId in our example might contain thousands of products, each with detailed specifications

- A BlueId representing Wikipedia would contain millions of interconnected articles

- A [Timeline](../timelines/introduction) of an active user could contain years of activity records

As in real life, you rarely need to download all knowledge—just the parts relevant to your current task. Blue's extension mechanism lets you precisely control how much data you retrieve.

## web.blue: The Blue Explorer

Web.blue is a website that helps you explore the Blue web. You can provide your own node providers—components that map BlueIds to documents—and visualize content in multiple ways:

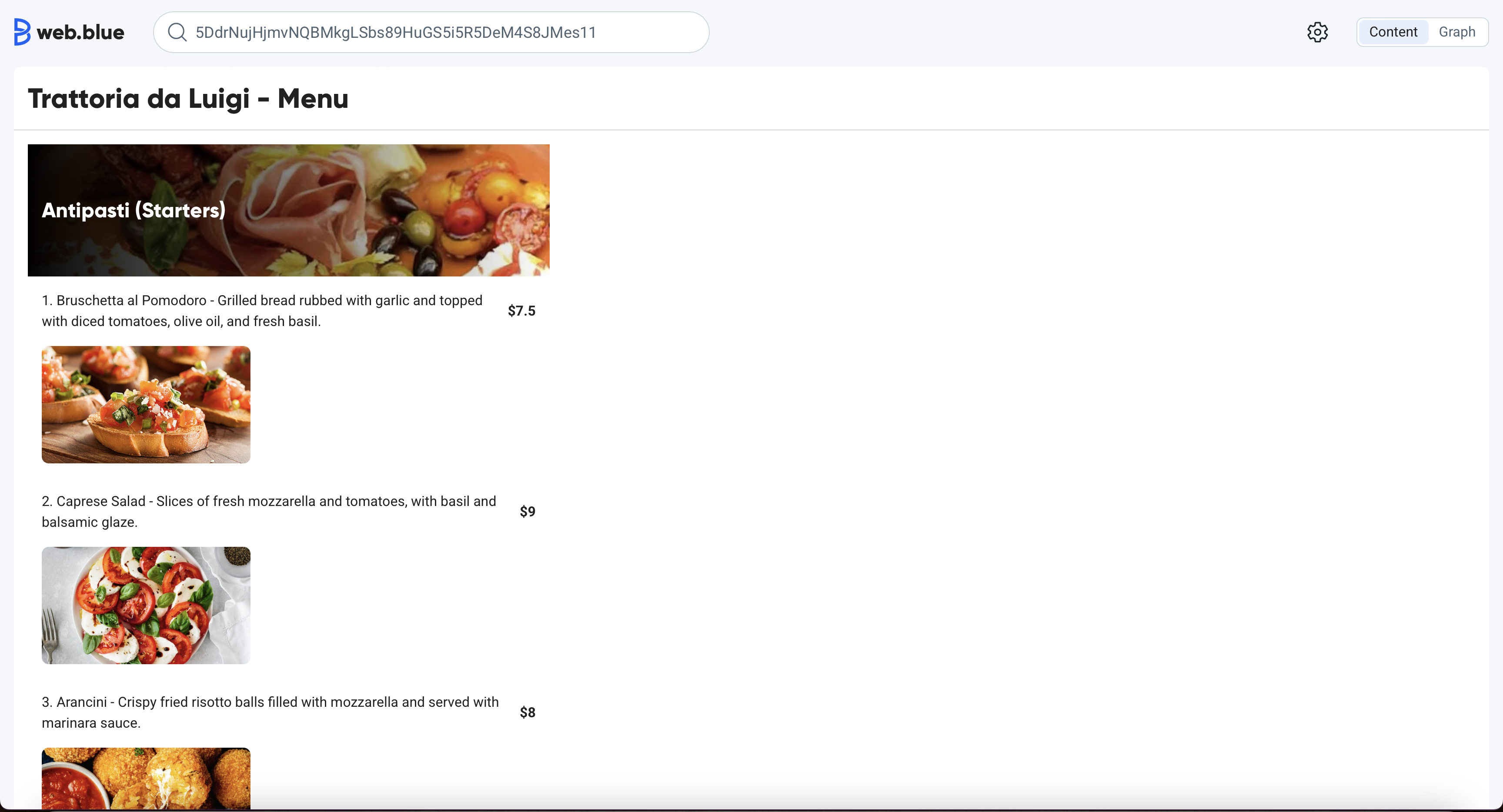

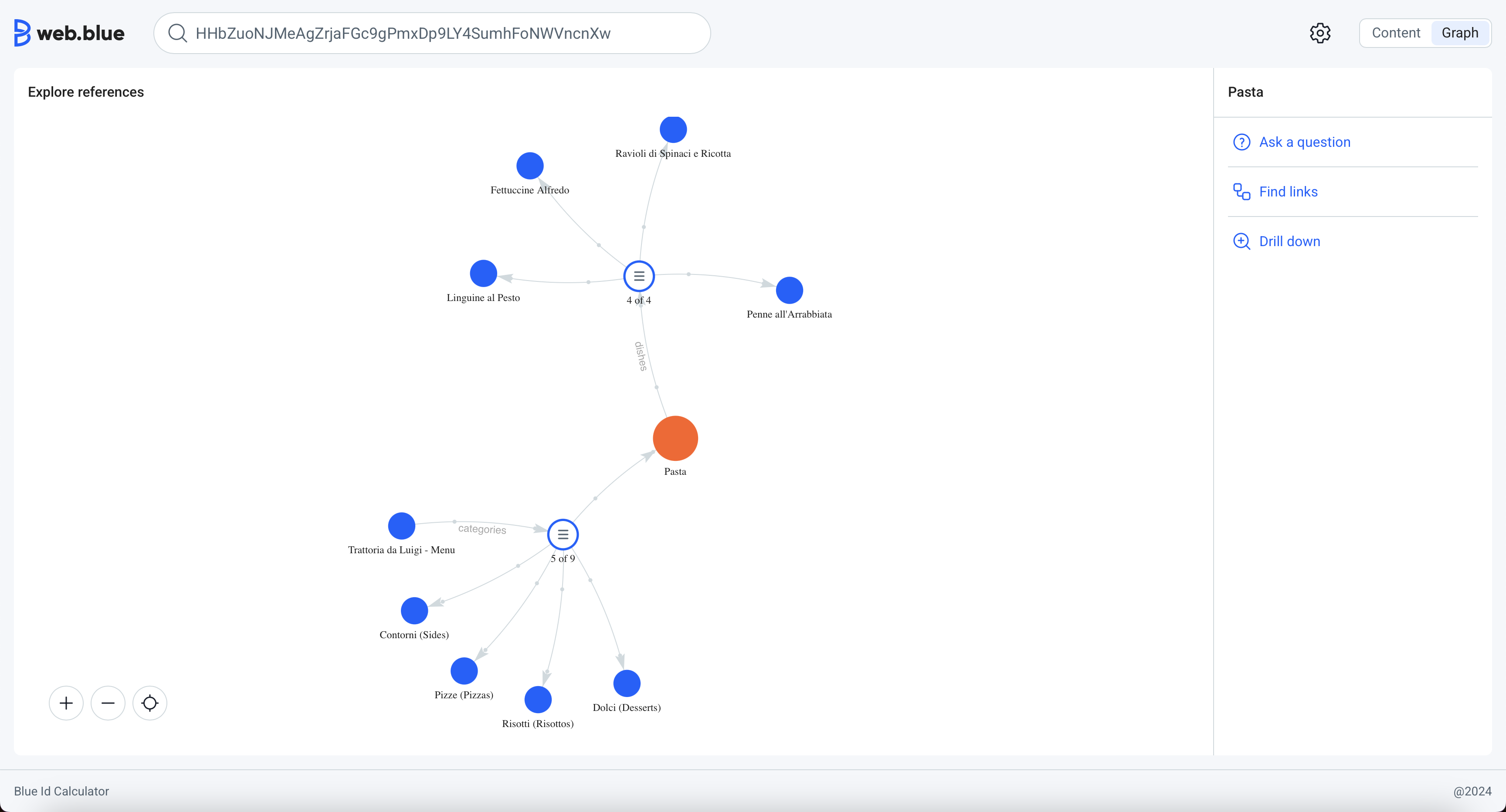

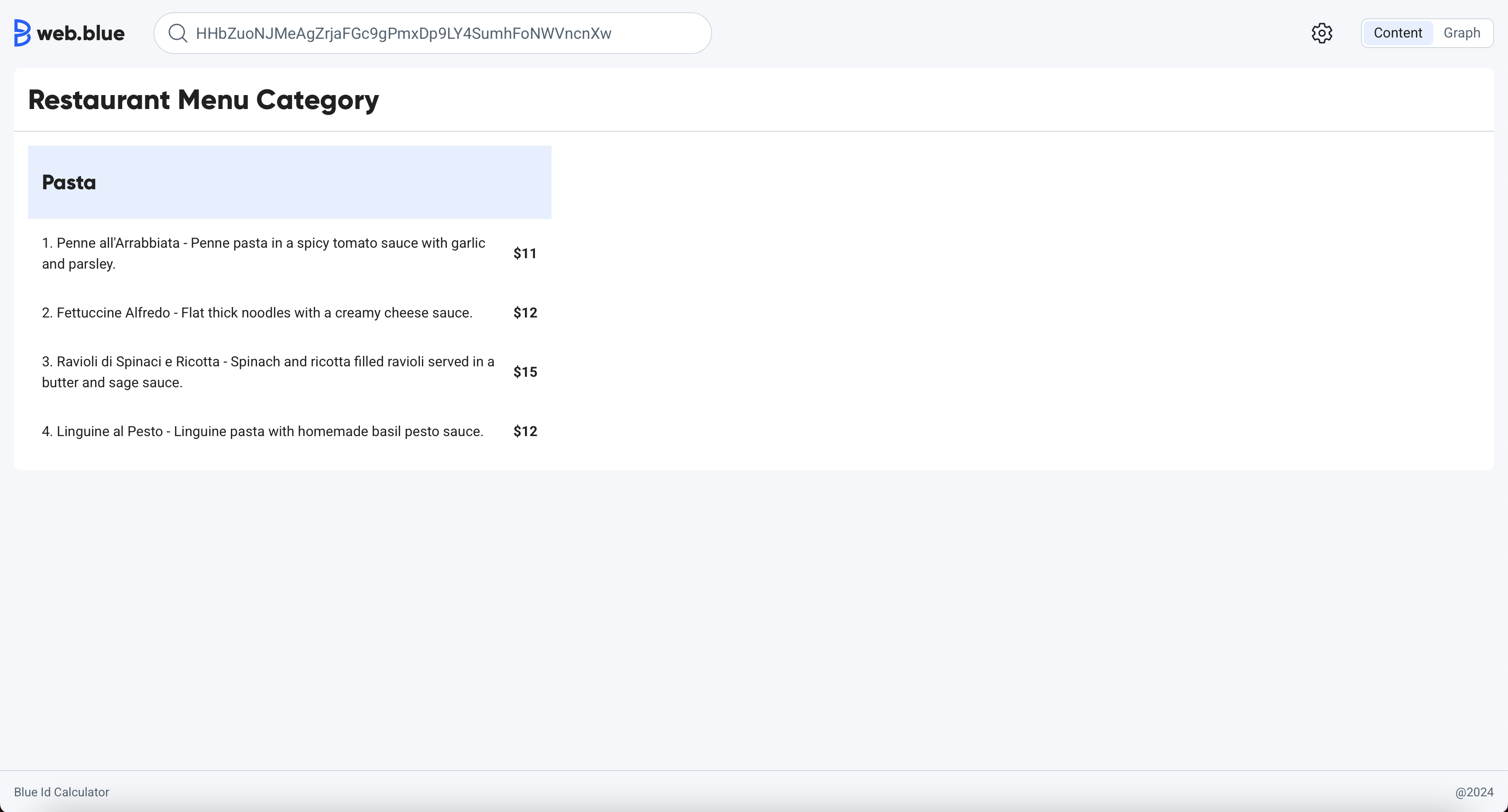

As a document:

Or as an interactive graph that you can traverse:

Web.blue demonstrates how BlueIds enable document exploration without prior knowledge of structure. The restaurant menu example loads gradually as content is retrieved from IPFS, showing how Blue documents can be distributed across decentralized storage systems.

This approach solves a fundamental web problem: content disappearing when URLs change. With BlueIds, you hold the fingerprint of the content, so you can retrieve it from any source that has it. The mapping between BlueIds and IPFS Content Identifiers follows specific transformation rules, implemented in [`BlueIdToCid.java`](https://github.com/bluecontract/blue-language-java/blob/master/src/main/java/blue/language/provider/ipfs/BlueIdToCid.java) in the Java implementation.

## Why BlueId Matters

The BlueId approach provides several unique advantages:

1. **Semantic References**: References point to meaning, not just bytes

2. **Universal Vocabulary**: Every piece of content has a unique "word" that identifies it

3. **Location Independence**: Content can move between systems while references remain valid

4. **Natural Deduplication**: Identical content is automatically unified

5. **Verifiable Content**: BlueIds serve as built-in integrity checks

Compare this with other hash-based systems:

| System          | What it Hashes                               | Purpose                                    |
| --------------- | -------------------------------------------- | ------------------------------------------ |
| BlueId          | Semantic structure with meaning preservation | Content-addressing with semantic awareness |
| Git Hash        | File content + metadata                      | Version control                            |
| IPFS CID        | Raw bytes                                    | File storage                               |
| Blockchain Hash | Transaction data                             | Transaction verification                   |

The key distinction is that BlueId preserves meaning, not just structure or bytes.

====================

DOCUMENT: The Blue Directive

CATEGORY: language

URL: https://language.blue/docs/language/blue-directive

====================

# The Blue Directive

While Blue enforces a rigorous structure internally, it aims to be as user-friendly as possible for document authors. The `blue` directive bridges this gap by transforming human-friendly documents into proper Blue documents before processing begins.

## Preprocessing with the Blue Directive

The `blue` directive appears at the root level of a document and specifies transformations to apply during preprocessing:

```yaml
blue: Ticket Details v1.0
Ticket Number: ABC-12345
Departure Date: 2023-10-15
Seat: 14A
```

Preprocessing occurs before any other document processing, including BlueId calculation. This ensures that:

1. The original document can be user-friendly and flexible

2. The resulting document strictly follows Blue conventions

3. The BlueId is calculated only after preprocessing completes

The `blue` directive itself is removed during preprocessing, meaning it doesn't affect the document's BlueId or semantic content.
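The ordering guarantee can be sketched as a small pipeline: strip the `blue` directive, apply each transformation in turn, and only then compute the identifier. In this hypothetical Java sketch, transformations are plain `UnaryOperator`s on a map and `idOf` is a caller-supplied stand-in for BlueId calculation; neither `Preprocessor` nor its method is the Blue library's API.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Function;
import java.util.function.UnaryOperator;

// Hypothetical preprocessing pipeline (not Blue library API).
final class Preprocessor {

    // Removes the `blue` directive, applies the transformations in order,
    // and only then hands the result to the identifier function.
    static String preprocessAndIdentify(Map<String, Object> document,
                                        List<UnaryOperator<Map<String, Object>>> transformations,
                                        Function<Map<String, Object>, String> idOf) {
        Map<String, Object> doc = new LinkedHashMap<>(document);
        doc.remove("blue");                           // the directive never reaches the identifier
        for (UnaryOperator<Map<String, Object>> t : transformations) {
            doc = t.apply(doc);
        }
        return idOf.apply(doc);
    }
}
```

Because the directive is removed first, two documents that differ only in their `blue` line end up with the same identifier.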

## Common Transformations

Let's explore the most useful transformations that can be included in the `blue` directive:

### Replace Inline Types with BlueIds

This transformation converts simple type names to their full BlueId references:

```yaml
blue:
  - type:
      blueId: 27B7fuxQCS1VAptiCPc2RMkKoutP5qxkh3uDxZ7dr6Eo
    mappings:
      Person: 8xYi5Svou5DVawB7CDEGuZitUGFChRYcJUF67bQ3NfXt
      Dog: G1pcQx2tq16z5yVqE9TGaCH5uCDAaMZ6uFts7d3NztYo
name: Alice Smith
type: Person
pet:
  type: Dog
  name: Rex
```

After preprocessing:

```yaml
name: Alice Smith
type:
  blueId: 8xYi5Svou5DVawB7CDEGuZitUGFChRYcJUF67bQ3NfXt
pet:
  type:
    blueId: G1pcQx2tq16z5yVqE9TGaCH5uCDAaMZ6uFts7d3NztYo
  name: Rex
```

This transformation (BlueId: `27B7fuxQCS1VAptiCPc2RMkKoutP5qxkh3uDxZ7dr6Eo`) allows you to write documents with simple type names that humans can understand.
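The effect of this transformation can be approximated in a few lines of Java: walk the document and, wherever `type` holds a plain name listed in `mappings`, replace it with a `blueId` reference. This is a simplified sketch with a hypothetical class name (`InlineTypeReplacer`), not the transformation's actual implementation.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch of "Replace Inline Types with BlueIds" (not the real transformation).
final class InlineTypeReplacer {

    // Returns a copy of `node` in which every `type: <Name>` listed in `mappings`
    // becomes `type: {blueId: <id>}`; nested maps are processed recursively.
    static Map<String, Object> replace(Map<String, ?> node, Map<String, String> mappings) {
        Map<String, Object> result = new LinkedHashMap<>();
        for (Map.Entry<String, ?> e : node.entrySet()) {
            Object value = e.getValue();
            if (e.getKey().equals("type") && value instanceof String name
                    && mappings.containsKey(name)) {
                value = Map.of("blueId", mappings.get(name));
            } else if (value instanceof Map<?, ?> child) {
                @SuppressWarnings("unchecked")
                Map<String, ?> childMap = (Map<String, ?>) child;
                value = replace(childMap, mappings);
            }
            result.put(e.getKey(), value);
        }
        return result;
    }
}
```

Applied to the `Alice Smith` document with the `Person` and `Dog` mappings, this produces the preprocessed structure shown above.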

### Infer Basic Types For Untyped Values

This transformation automatically determines types for primitive values:

```yaml
blue:
  - type:
      blueId: FGYuTXwaoSKfZmpTysLTLsb8WzSqf43384rKZDkXhxD4
x: 12
y: true
z: Hello
```

After preprocessing:

```yaml
x:
  type: Integer
  value: 12
y:
  type: Boolean
  value: true
z:
  type: Text
  value: Hello
```

This transformation (BlueId: `FGYuTXwaoSKfZmpTysLTLsb8WzSqf43384rKZDkXhxD4`) eliminates the need to explicitly specify types for every value.
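A rough Java equivalent of this inference step wraps each untyped scalar in a `type`/`value` pair. The mapping of Java values to `Integer`, `Double`, `Boolean`, and `Text` is an assumption based on the basic types listed in the default directive, nested nodes are left alone for brevity, and `BasicTypeInference` is a hypothetical name.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch of basic type inference (not the real transformation).
final class BasicTypeInference {

    // Chooses a basic type name for a scalar value (assumed mapping).
    static String inferType(Object value) {
        if (value instanceof Integer || value instanceof Long) return "Integer";
        if (value instanceof Float || value instanceof Double) return "Double";
        if (value instanceof Boolean) return "Boolean";
        return "Text";
    }

    // Wraps every top-level scalar as {type: <inferred>, value: <original>}.
    static Map<String, Object> infer(Map<String, ?> document) {
        Map<String, Object> result = new LinkedHashMap<>();
        document.forEach((key, value) -> {
            if (value instanceof Map) {
                result.put(key, value);   // nested nodes are not handled in this sketch
            } else {
                Map<String, Object> typed = new LinkedHashMap<>();
                typed.put("type", inferType(value));
                typed.put("value", value);
                result.put(key, typed);
            }
        });
        return result;
    }
}
```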

### Convert Attribute Names To Camel Case

This transformation standardizes field names to programming-friendly format:

```yaml
blue:
  - type:
      blueId: GpMaofZmtLhEQbwkaYd3hm6Es1udEySM6svg8UoN1yEH
Seat No.: 156
Arrival Time: 15:25
```

After preprocessing:

```yaml
seatNo: 156
arrivalTime: 15:25
```

This transformation (BlueId: `GpMaofZmtLhEQbwkaYd3hm6Es1udEySM6svg8UoN1yEH`) makes documents both human-readable and programming-friendly.
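The exact casing rules belong to the transformation itself. The Java sketch below shows one plausible approach, assumed rather than taken from the transformation: split the name on non-alphanumeric characters, lowercase the first word, and capitalize the rest. Acronyms and non-Latin scripts are not handled, and `CamelCase` is a hypothetical name.

```java
import java.util.Locale;

// Hypothetical camel-case conversion (one plausible rule set, not the real transformation).
final class CamelCase {

    // "Seat No." -> "seatNo", "Arrival Time" -> "arrivalTime"
    static String toCamelCase(String name) {
        String[] words = name.trim().split("[^A-Za-z0-9]+");
        StringBuilder sb = new StringBuilder();
        for (String word : words) {
            if (word.isEmpty()) continue;             // e.g. from leading punctuation
            String lower = word.toLowerCase(Locale.ROOT);
            if (sb.length() == 0) {
                sb.append(lower);
            } else {
                sb.append(Character.toUpperCase(lower.charAt(0))).append(lower.substring(1));
            }
        }
        return sb.toString();
    }
}
```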

### Normalize DateTime Values

This transformation converts date strings to structured DateTime objects:

```yaml
blue:
  - type:
      blueId: 93nhEGAmviA5Ey8wZ4ZfeHheAbzvxz473rG92zznvTps
    datetimePattern: yyyy-MM-dd HH:mm
arrival: 2025-03-27 15:25
```

After preprocessing:

```yaml
arrival:
  type: DateTime
  year: 2025
  month: 3
  day: 27
  hour: 15
  minute: 25
```

This transformation (BlueId: `93nhEGAmviA5Ey8wZ4ZfeHheAbzvxz473rG92zznvTps`) allows human-readable dates while ensuring proper structural representation.
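Using only `java.time`, the same normalization can be sketched as parsing the string with the directive's `datetimePattern` and emitting the individual fields. The output shape is copied from the example above; `DateTimeNormalizer` is a hypothetical name, not part of any Blue library.

```java
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch of DateTime normalization using java.time.
final class DateTimeNormalizer {

    // Parses `text` with a pattern such as "yyyy-MM-dd HH:mm" and returns
    // the structured form shown in the example above.
    static Map<String, Object> normalize(String text, String datetimePattern) {
        LocalDateTime dt = LocalDateTime.parse(text, DateTimeFormatter.ofPattern(datetimePattern));
        Map<String, Object> result = new LinkedHashMap<>();
        result.put("type", "DateTime");
        result.put("year", dt.getYear());
        result.put("month", dt.getMonthValue());
        result.put("day", dt.getDayOfMonth());
        result.put("hour", dt.getHour());
        result.put("minute", dt.getMinute());
        return result;
    }
}
```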

### Map Attribute Names

This transformation translates field names between languages or formats:

```yaml
blue:
  - type:
      blueId: 27B7fuxQCS1VAptiCPc2RMkKoutP5qxkh3uDxZ7dr6Eo
    mappings:
      チケット整理番号: ticketSerial
      出発日時: departure
      座席番号: seatNo
チケット整理番号: HL-923554
出発日時: 2025-03-27 15:25
座席番号: 15
```

After preprocessing:

```yaml
ticketSerial: HL-923554
departure: 2025-03-27 15:25
seatNo: 15
```

This enables content creation in any language while maintaining consistent field names in the processed document.
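Renaming attributes is essentially a key lookup. The hypothetical Java sketch below (`AttributeRenamer`, not Blue library API) renames top-level keys found in `mappings` and keeps all other keys and all values unchanged; it assumes no two source keys map to the same target name.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch of attribute-name mapping (not the real transformation).
final class AttributeRenamer {

    // Renames top-level keys listed in `mappings`; other keys and all values are kept.
    static Map<String, Object> rename(Map<String, ?> document, Map<String, String> mappings) {
        Map<String, Object> result = new LinkedHashMap<>();
        document.forEach((key, value) -> result.put(mappings.getOrDefault(key, key), value));
        return result;
    }
}
```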

## Default Blue Directive

Every Blue document has an implicit default `blue` directive if none is specified:

```yaml
blue:
  - type:
      blueId: 27B7fuxQCS1VAptiCPc2RMkKoutP5qxkh3uDxZ7dr6Eo
    mappings:
      Text: F92yo19rCcbBoBSpUA5LRxpfDejJDAaP1PRxxbWAraVP
      Double: 68ryJtnmui4j5rCZWUnkZ3DChtmEb7Z9F8atn1mBSM3L
      Integer: DHmxTkFbXePZHCHCYmQr2dSzcNLcryFVjXVHkdQrrZr8
      Boolean: EL6AjrbJsxTWRTPzY8WR8Y2zAMXRbydQj83PcZwuAHbo
      List: G8wmfjEqugPEEXByMYWJXiEdbLToPRWNQEekNxrxfQWB
      Dictionary: 294NBTj2mFRL3RB4kDRUSckwGg7Kzj6T8CTAFeR1kcSA
  - type:
      blueId: FGYuTXwaoSKfZmpTysLTLsb8WzSqf43384rKZDkXhxD4
```

This default directive (BlueId: `EWj6aMM5nRAS9hYmrJXpJeL6zNRCVtZJguan9b4ZqJN7`) provides mappings for basic types and enables type inference.

## A Complete Example

Let's see what happens when we apply multiple transformations together:

```yaml
blue: Ticket Details v.1.51
Ticket Serial No.: HL-923554
Departure: 2025-03-27 15:25
Seat No.: 15
```

After all transformations:

```yaml
ticketSerial:
  type:
    blueId: F92yo19rCcbBoBSpUA5LRxpfDejJDAaP1PRxxbWAraVP
  value: HL-923554
departure:
  type:
    blueId: 4UevQMiUg5BjYe9sX26xf4QHgFoKPmf7JVAM9wk4bmzR
  year:
    type:
      blueId: DHmxTkFbXePZHCHCYmQr2dSzcNLcryFVjXVHkdQrrZr8
    value: 2025
  month:
    type:
      blueId: DHmxTkFbXePZHCHCYmQr2dSzcNLcryFVjXVHkdQrrZr8
    value: 3
  day:
    type:
      blueId: DHmxTkFbXePZHCHCYmQr2dSzcNLcryFVjXVHkdQrrZr8
    value: 27
  hour:
    type:
      blueId: DHmxTkFbXePZHCHCYmQr2dSzcNLcryFVjXVHkdQrrZr8
    value: 15
  minute:
    type:
      blueId: DHmxTkFbXePZHCHCYmQr2dSzcNLcryFVjXVHkdQrrZr8
    value: 25
seatNo:
  type:
    blueId: DHmxTkFbXePZHCHCYmQr2dSzcNLcryFVjXVHkdQrrZr8
  value: 15
```

The BlueId of this fully processed document is `2YUzCvdTQKB34MutfhsKHEEbdNqJL6FVPNvNcVVvBJDP`.

## The Power of Text Aliases

One of the most powerful aspects of the Blue directive is the ability to use text aliases for transformation sets:

```yaml
blue: Ticket Details v.1.51
Ticket Serial No.: HL-923554
Departure: 2025-03-27 15:25
Seat No.: 15
```

```yaml
blue: Ticket Details v.1.51 JP
チケット整理番号: HL-923554
出発日時: 2025-03-27 15:25
座席番号: 15
```

Despite being written in different languages, both documents preprocess to identical structures with the same BlueId: `2YUzCvdTQKB34MutfhsKHEEbdNqJL6FVPNvNcVVvBJDP`.

To make this work, you register aliases with your Blue processor:

```java
blue.addPreprocessingAliases(Map.of(
        "Ticket Details v.1.51", "5VEx7ee9to3Z56eVdkNzBfpHaZU9KD4moYttpS5h6bHt",
        "Ticket Details v.1.51 JP", "3wfkqtvZvgiff55ZhrtToK3sDcPHvKKY5DcZw68nor3i"
));
```

## URL References and Security

The `blue` directive can also reference a URL:

```yaml
blue: https://language.blue/simple.blue
name: Alice
type: Person
age: 25
```

For security reasons, URL fetching is disabled by default in most Blue processors. To enable it:

```java

blue.enablePreprocessingDirectivesFetchForUrls();

```

:::caution

Enabling URL fetching creates potential security vulnerabilities. Use registered aliases in production systems.

:::
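The alias and URL rules from the last two sections can be summarised as a resolution policy. The Java sketch below is hypothetical (`DirectiveResolver` and its methods are not the Blue library API): registered aliases resolve to BlueIds, URLs are refused unless fetching was explicitly enabled, and any other text is rejected as an unknown alias. Actual fetching is left out.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical directive-resolution policy (not Blue library API).
final class DirectiveResolver {
    private final Map<String, String> aliases = new HashMap<>();
    private boolean urlFetchEnabled = false;           // disabled by default

    void addAliases(Map<String, String> more) {
        aliases.putAll(more);
    }

    void enableUrlFetch() {
        urlFetchEnabled = true;
    }

    // Returns the BlueId of the transformation set named by a text directive.
    String resolve(String directive) {
        String blueId = aliases.get(directive);
        if (blueId != null) {
            return blueId;                             // registered aliases are preferred
        }
        if (directive.startsWith("http://") || directive.startsWith("https://")) {
            if (!urlFetchEnabled) {
                throw new SecurityException("URL directives are disabled: " + directive);
            }
            throw new UnsupportedOperationException("Fetching is out of scope for this sketch");
        }
        throw new IllegalArgumentException("Unknown directive alias: " + directive);
    }
}
```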

## Creating Custom Transformations

Anyone can define custom transformations for specialized preprocessing needs. These transformations must be registered with processors that need to understand them.

====================

DOCUMENT: Introduction to Contracts

CATEGORY: contracts

URL: https://language.blue/docs/contracts/introduction

====================

# Introduction to Contracts

Blue contracts are the foundation of dynamic, event-driven behavior in Blue documents. While the Blue language itself provides structure and content addressing, contracts bring documents to life by defining how they respond to events and interact with the world.

## What Are Blue Contracts?

Blue contracts are components within documents that define:

1. **Event channels** that documents listen to

2. **Processing rules** determining how to handle events

3. **State transitions** that update document content

4. **Operations** that documents expose to the outside world

Unlike traditional smart contracts on blockchain platforms, Blue contracts don't require global consensus or a specific runtime environment. Instead, they focus on deterministic behavior that any compliant processor can verify independently.

## Core Philosophy

Blue contracts are built on four key principles:

- **Event-Driven Architecture**: Documents react to events from multiple sources

- **Deterministic Processing**: Given the same inputs, deterministic contracts produce identical results across all processors

- **Channel-First Design**: All interactions begin with channels

- **Composable Behavior**: Contracts combine to create sophisticated applications

## Core Components

### 1. Channels

Channels are the entry points for all events into a document:

```yaml
contracts:
  userTimeline:
    type: Timeline Channel
    account: alice@example.com
```

Every event that affects a document must enter through a channel. Channels can represent user timelines, document changes, REST API endpoints, or external systems.

### 2. Handlers

Handlers process events from channels and execute business logic:

```yaml
contracts:
  paymentHandler:
    type: Sequential Workflow
    channel: paymentEvents
    steps:
      - name: Verify Amount
        # Step details...
```

The most common handler is the Sequential Workflow, which executes a series of steps in response to events.

### 3. Additional Contract Types

Beyond channels and handlers, Blue supports other specialized contracts:

```yaml
contracts:
  schemaValidator:
    type: Schema Validator
    amount:
      minimum: 0
      maximum: 1000000
```

These contracts serve specific purposes like validating document content, defining operations that others can invoke, or specifying how processors should handle the document. Each contract type focuses on a particular aspect of document behavior.

## Hierarchical Processing

Blue documents can contain nested nodes with their own contracts:

```yaml
name: Payment Agreement
status: pending
btcPayment:
  type: BTC Payment
  contracts:
    btcChannel:
      # BTC-specific contracts
contracts:
  statusChannel:
    # Parent document contracts
```

When processing such documents, the processor evaluates all contracts at all levels of the hierarchy, creating a composed system that respects all defined behaviors.
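To see which contracts a processor has to consider, picture a walk over the whole document tree. This hypothetical Java sketch (`ContractCollector`, not Blue library API) collects every entry under a `contracts` key together with the path of the node that owns it; it says nothing about the order in which a real processor evaluates them.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical sketch: find contracts at every level of a nested-map document.
final class ContractCollector {

    // Path of the owning node plus contract name, e.g. "/btcPayment" + "btcChannel".
    record Found(String ownerPath, String contractName) {}

    static List<Found> collect(Map<String, ?> node) {
        List<Found> found = new ArrayList<>();
        walk(node, "", found);
        return found;
    }

    private static void walk(Map<String, ?> node, String path, List<Found> found) {
        for (Map.Entry<String, ?> e : node.entrySet()) {
            if (!(e.getValue() instanceof Map<?, ?> child)) continue;   // scalars hold no contracts
            if (e.getKey().equals("contracts")) {
                for (Object name : child.keySet()) {
                    found.add(new Found(path.isEmpty() ? "/" : path, name.toString()));
                }
            } else {
                @SuppressWarnings("unchecked")
                Map<String, ?> childMap = (Map<String, ?>) child;
                walk(childMap, path + "/" + e.getKey(), found);           // descend into nested nodes
            }
        }
    }
}
```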

## Document Processing Mechanics

The Blue processing model follows a single-event pattern:

1. An event arrives at a document through a channel

2. The processor identifies workflows listening to that channel

3. Workflows execute their logic, potentially updating the document

4. If updates occur, Document Update Channels may trigger additional processing

5. Workflows may also emit new events, causing further processing

6. This cycle continues until the document reaches a stable state

7. The processor returns the final updated document and any triggered events

This approach ensures that all compliant processors reach exactly the same final state given the same inputs. The deterministic nature of this processing is fundamental to Blue's trust model: it allows independent verification without requiring global consensus.

A critical aspect of this model is maintaining consistent event ordering when processing events from multiple channels, and ensuring that all processors reference the same external reality. For example, when processors handle events from external systems like cryptocurrency transactions or sports results, they must agree on the canonical source of truth. Blue addresses these challenges through channel coordination patterns and specialization mechanisms, which we'll explore in detail in the "Channel-Based Event Model" section.
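The numbered cycle above can be sketched as a queue-driven loop. In this hypothetical Java model (`EventLoop` is not Blue library API), a handler receives the document and an event and may return follow-up events; the loop runs until no events remain. Document Update Channels are left out, and the `maxSteps` cap is an added safety assumption that this section does not specify.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;
import java.util.Map;

// Hypothetical sketch of event processing until a stable state (not Blue library API).
final class EventLoop {

    record Event(String channel, Object payload) {}

    // A handler may mutate the document and returns the events it emits.
    interface Handler {
        List<Event> handle(Map<String, Object> document, Event event);
    }

    // Processes `initial` and every event emitted in response, in FIFO order,
    // and returns all emitted events.
    static List<Event> process(Map<String, Object> document,
                               Map<String, List<Handler>> handlersByChannel,
                               Event initial, int maxSteps) {
        Deque<Event> queue = new ArrayDeque<>();
        List<Event> emitted = new ArrayList<>();
        queue.add(initial);
        int steps = 0;
        while (!queue.isEmpty()) {
            if (++steps > maxSteps) {
                throw new IllegalStateException("No stable state after " + maxSteps + " events");
            }
            Event event = queue.poll();
            for (Handler h : handlersByChannel.getOrDefault(event.channel(), List.of())) {
                List<Event> out = h.handle(document, event);
                emitted.addAll(out);
                queue.addAll(out);                     // emitted events trigger further processing
            }
        }
        return emitted;
    }
}
```

Processing events strictly in queue order is one simple way to keep runs reproducible; it is a design choice of this sketch, not a statement about how Blue orders events across channels.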

## Comparison to Traditional Smart Contracts

| Feature | Blue Contracts | Blockchain Smart Contracts |
|---------|----------------|----------------------------|
| Execution Environment | Any Blue processor | Specific blockchain VM |
| Consensus Mechanism | Independent verification | Global network consensus |
| State Storage | Document-centric | Global ledger |
| Integration Approach | Multiple channels | Limited oracle integrations |
| Privacy Model | Flexible, document-specific | Public by default |
| Scripting Capabilities | JavaScript and more | Platform-specific language |
| Reality Model | Multiple verified perspectives | Single global state |

Blue's approach mirrors real-world reasoning: individuals independently observe events, apply shared rules, and reach compatible conclusions without central coordination. Unlike blockchain's enforced global consensus, Blue enables multiple valid perspectives while maintaining verification through clear rules and shared evidence. This provides flexibility without sacrificing trust, allowing systems to model reality more naturally.

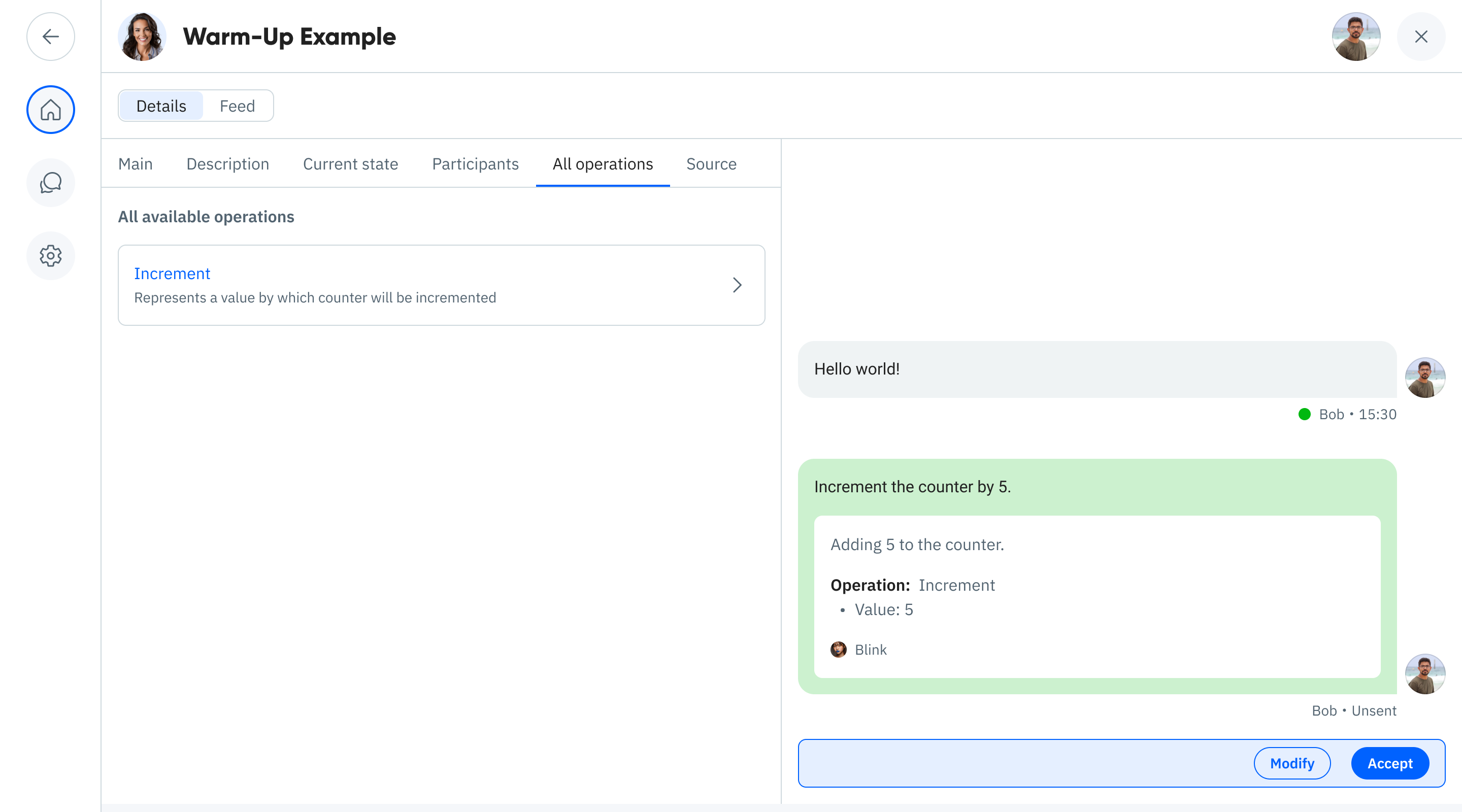

## A Minimal Example

Here's a complete working example of a Blue document with contracts:

```yaml
name: Simple Counter
counter: 0
contracts:
  # Define a channel for events
  incrementChannel:
    type: Timeline Channel
    account: user@example.com
  # Define a workflow for those events
  counterWorkflow:
    type: Sequential Workflow
    channel: incrementChannel
    steps:
      - name: Increment Counter
        type: Update Document
        changeset:
          - op: replace
            path: /counter
            val: ${document('/counter') + 1}
```

This document listens to a timeline and increments a counter each time an event arrives.
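The `Update Document` changeset resembles a JSON Patch `replace` operation addressed by a JSON-Pointer-style path. Assuming that reading, a minimal Java sketch of applying `op: replace` to a document held as nested maps looks like this. Evaluating `${document('/counter') + 1}` is out of scope, so the caller passes the new value directly, and `ChangesetApplier` is a hypothetical name.

```java
import java.util.Map;

// Hypothetical sketch of a "replace" change (not Blue library API).
final class ChangesetApplier {

    // Sets the value at `path` (e.g. "/counter" or "/price/amount"), failing if the
    // target does not already exist. Paths must start with "/"; JSON Pointer
    // escapes such as ~0 and ~1 are not handled here.
    @SuppressWarnings("unchecked")
    static void replace(Map<String, Object> document, String path, Object val) {
        String[] segments = path.substring(1).split("/");
        Map<String, Object> current = document;
        for (int i = 0; i < segments.length - 1; i++) {
            Object next = current.get(segments[i]);
            if (!(next instanceof Map)) {
                throw new IllegalArgumentException("No object at segment '" + segments[i] + "'");
            }
            current = (Map<String, Object>) next;
        }
        String last = segments[segments.length - 1];
        if (!current.containsKey(last)) {
            throw new IllegalArgumentException("Nothing to replace at " + path);
        }
        current.put(last, val);
    }
}
```

A workflow step like the one above would then amount to `replace(doc, "/counter", currentCounter + 1)`.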

## Processing Example

Here's how this document would be processed in Java:

```java
// Parse the document
Blue blue = new Blue(nodeProvider);
Node doc = blue.yamlToNode(yaml);

// Create a processor for the document
DocumentProcessor processor = blue.processor(doc);

// Process an incoming event
ProcessingResult result = processor.processEvent(timelineEvent);

// Get the updated document and triggered events
Node updatedDoc = result.getUpdatedDocument();
var triggeredEvents = result.getTriggeredEvents();

// The counter is now incremented:
// updatedDoc will have counter = 1
```

We can also convert the Blue document to a Java object:

```java
// Define a Java class representing the document
// (@Data is Lombok's annotation that generates getters, setters,
// equals/hashCode and toString)
@Data
public class SimpleCounter {
    private int counter;
}

// Convert the updated document to a Java object
SimpleCounter simpleCounter = blue.nodeToObject(updatedDoc, SimpleCounter.class);

// Access the counter property
assert simpleCounter.getCounter() == 1;
```

This example demonstrates the complete lifecycle of contract processing:

1. An event arrives through the `incrementChannel`

2. The `counterWorkflow` processes the event

3. The document is updated with the counter incremented

4. The processor returns the updated document

5. The document can be mapped to Java objects for easier manipulation

Each processor following the Blue contract specification will reach identical results when processing the same events in the same order.
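As a plain-Java illustration of that determinism (a hypothetical model, not the Blue processor API), the sketch below reduces the Simple Counter document to its `counter` field and treats processing as a pure function over an ordered event list. `CounterProcessor`, `apply`, and `replay` are names invented for this example.

```java
import java.util.List;

// Hypothetical model of a Blue-style processor (NOT the real Blue API):
// the document state is just the counter, and processing is a pure function
// of the starting state and the ordered events.
final class CounterProcessor {
    private int counter;

    CounterProcessor(int initialCounter) {
        this.counter = initialCounter;
    }

    // Mirrors the counterWorkflow step: replace /counter with counter + 1.
    // The counter workflow ignores the event payload, so eventId is unused here.
    void apply(String eventId) {
        counter = counter + 1;
    }

    int counter() {
        return counter;
    }

    // Replays an ordered event list from the given starting state.
    static int replay(int initialCounter, List<String> events) {
        CounterProcessor processor = new CounterProcessor(initialCounter);
        for (String event : events) {
            processor.apply(event);
        }
        return processor.counter();
    }
}
```

Because `replay` depends only on the starting state and the event sequence, two independent processors replaying the same three events from `0` both end at `3`. That guarantee would break if a step read something outside the document and its events, such as the local clock.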

====================

DOCUMENT: Processing Checkpoints

CATEGORY: contracts

URL: https://language.blue/docs/contracts/checkpoints

====================

# Processing Checkpoints

Blue documents evolve as they process events, changing their state—and therefore their BlueId—while maintaining their fundamental identity. Processing checkpoints capture this temporal dimension, serving as formal markers in a document's ongoing journey.

## The Need for State Tracking

Consider a document that processes events from a timeline:

```yaml
name: Simple Counter
counter: 0
contracts:
  incrementChannel:
    type: Timeline Channel
    account: user@example.com
  counterWorkflow:
    type: Sequential Workflow
    channel: incrementChannel
    steps:
      - name: Update Counter
        type: Update Document
        changeset:
          - op: replace
            path: /counter
            val: ${document('/counter') + 1}
```

As this document processes events, its state changes:

- After one event: `counter: 1`

- After two events: `counter: 2`

- After three events: `counter: 3`

Each state change creates a new BlueId—a new content hash representing the document's new reality. Without some record of which events created this state, processors would have no way to know where in the event stream this document currently exists.
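The claim that every state has its own content hash can be illustrated in plain Java. This sketch assumes a simplified, hand-built canonical string and hashes it with SHA-256 via `java.security.MessageDigest`; it is a stand-in only, not the actual BlueId computation, and `ContentHash.of` is a hypothetical helper.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

// Illustrative stand-in for a content hash. This is NOT the real BlueId
// algorithm: it hashes a hypothetical, simplified canonical string.
final class ContentHash {
    static String of(String name, int counter) {
        // Hypothetical canonical form; real Blue canonicalization differs.
        String canonical = "name=" + name + ";counter=" + counter;
        try {
            MessageDigest sha256 = MessageDigest.getInstance("SHA-256");
            byte[] digest = sha256.digest(canonical.getBytes(StandardCharsets.UTF_8));
            StringBuilder hex = new StringBuilder();
            for (byte b : digest) {
                hex.append(String.format("%02x", b)); // two lowercase hex chars per byte
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            // Every Java platform implementation must support SHA-256.
            throw new IllegalStateException(e);
        }
    }
}
```

Equal content always yields an equal hash, while incrementing `counter` yields a different one. The hash records *what* the state is, not *which* events produced it; that is the gap a checkpoint fills.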

## The Processing Checkpoint Contract

The Processing Checkpoint contract serves as a formal record of a document's position in time:

```yaml
name: Simple Counter
counter: 2
contracts:
  incrementChannel:
    type: Timeline Channel
    account: user@example.com
  counterWorkflow:
    type: Sequential Workflow
    channel: incrementChannel
    steps:
      - name: Update Counter
        type: Update Document
        changeset:
          - op: replace
            path: /counter
            val: ${document('/counter') + 1}
  checkpoint:
    type: Processing Checkpoint
    # Implementation details follow
```

This contract is optional, but when present, processors are expected to automatically update it after processing each event, maintaining an accurate record of the document's temporal position.

## Common Checkpoint Types

The Blue language allows various checkpoint implementations. Here are the most common types:

### Channel Event Checkpoint

This approach records the BlueId of the last processed event from each channel:

```yaml
checkpoint:
  type: Channel Event Checkpoint
  lastEvents:
    incrementChannel:
      blueId: 7UEBwTmRMfQ92rGt4vHkzPa8Ypd5KJsLNcA3FV6xDqbn # Last processed event
```

This approach:

- Provides exact tracking of which events were processed

- Works reliably across decentralized processors

- Supports multi-channel documents with independent event streams

For documents with multiple channels, all are tracked independently:

```yaml
checkpoint:
  type: Channel Event Checkpoint
  lastEvents:
    userChannel:
      blueId: 7UEBwTmRMfQ92rGt4vHkzPa8Ypd5KJsLNcA3FV6xDqbn
    paymentChannel:
      blueId: CgJ83PcZwuAHboEL6AjrbJsxTWRTPzY8WR8Y2zAMXRbyd
    weatherChannel:
      blueId: JXiEdbLToPRWNQEekNxrxfQWBG8wmfjEqugPEEXByMYW
```
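One way a processor might hold a Channel Event Checkpoint in memory is as a map from channel name to the BlueId of the last processed event, overwritten per channel after each event. This is a minimal sketch under that assumption; `ChannelEventCheckpoint`, `record`, and `lastEvent` are hypothetical names, not a published Blue API.

```java
import java.util.Map;
import java.util.Optional;
import java.util.TreeMap;

// Hypothetical in-memory model of a Channel Event Checkpoint (not a Blue API):
// channel name -> BlueId of the last event processed from that channel.
final class ChannelEventCheckpoint {
    // TreeMap keeps channel names sorted, giving a stable iteration order.
    private final Map<String, String> lastEvents = new TreeMap<>();

    // Called after an event from `channel` has been fully processed.
    // Only that channel's entry changes; other channels are untouched.
    void record(String channel, String eventBlueId) {
        lastEvents.put(channel, eventBlueId);
    }

    // Empty when no event from this channel has been processed yet.
    Optional<String> lastEvent(String channel) {
        return Optional.ofNullable(lastEvents.get(channel));
    }
}
```

Recording a new event on `userChannel` leaves `paymentChannel` untouched, which is the per-channel independence described above.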

### Timestamp Checkpoint

In some scenarios, a timestamp-based checkpoint is used instead:

```yaml
checkpoint:
  type: Timestamp Checkpoint
  timestamp: '2023-10-15T14:30:00Z' # Last processed event timestamp
```

Important considerations for timestamp checkpoints:

- The timestamp must be deterministic (typically derived from event timestamps)

- All processors must interpret time identically

- Precision must be sufficient to distinguish between events

Timestamp checkpoints work best in controlled environments where:

- Events have reliable timestamps

- Event ordering by time is consistent

- Time interpretation is standardized across processors
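These considerations can be made concrete with `java.time.Instant`. In this sketch (the `TimestampCheckpoint` class and its `isNew` and `advance` methods are hypothetical, not a Blue API), an event counts as new only if its timestamp is strictly after the checkpoint. This shows why precision matters: a second event carrying exactly the checkpoint's timestamp would be treated as already processed.

```java
import java.time.Instant;

// Hypothetical Timestamp Checkpoint model (not a Blue API).
// The timestamp is taken from event data, never from the local clock,
// so every processor derives the same value.
final class TimestampCheckpoint {
    private Instant timestamp;

    TimestampCheckpoint(Instant timestamp) {
        this.timestamp = timestamp;
    }

    // An event is "new" only if it is strictly later than the checkpoint.
    boolean isNew(Instant eventTimestamp) {
        return eventTimestamp.isAfter(timestamp);
    }

    // Move the checkpoint to the timestamp of the event just processed.
    void advance(Instant eventTimestamp) {
        if (!isNew(eventTimestamp)) {
            throw new IllegalArgumentException("event is not after the checkpoint");
        }
        timestamp = eventTimestamp;
    }

    Instant timestamp() {
        return timestamp;
    }
}
```

`Instant.parse` reads ISO-8601 UTC strings such as `'2023-10-15T14:30:00Z'`, so all processors interpret the stored value identically.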

## The River of Time: Document Identity Across States

In traditional databases, identity is imposed externally: a record with ID 12345 remains "the same record" regardless of how its contents change. But in a content-addressed system like Blue, the fundamental question becomes more profound: what makes a document "the same document" when its content—and thus its BlueId—changes over time?

The answer lies in the Processing Checkpoint contract, which creates a formal history of causality. This history binds different document states together, creating a continuous identity through time:

```yaml
# The document in its past (BlueId: a1b2c3...)
name: Simple Counter
counter: 0
contracts:
  # Channel and workflow definitions
  checkpoint:
    type: Channel Event Checkpoint
    lastEvents:
      incrementChannel: { blueId: null } # No events processed yet
```

```yaml
# The document in its present (BlueId: d4e5f6...)
name: Simple Counter
counter: 2
contracts:
  # Channel and workflow definitions
  checkpoint:
    type: Channel Event Checkpoint
    lastEvents:
      incrementChannel: { blueId: 8Y2zAMXRbydQj83PcZwuAHboEL6AjrbJsxTWRTPzY }
```

These two documents have different BlueIds because their content has changed, yet they represent the same logical entity at different points in its lifecycle. The checkpoint creates a causal connection between them, showing how one evolved into the other.

This is more than just a technical feature: it's a formal model of identity through change. Like the Ship of Theseus in the classic philosophical puzzle, which remains "the same ship" even as all its planks are replaced over time, a Blue document remains "the same document" through its processing history despite having completely different content and a different BlueId.

## The Three Temporal Dimensions

The Processing Checkpoint gives every document three temporal dimensions:

### 1. Verifiable Past

The checkpoint's record of processed events creates a verifiable history. Any processor can:

- Validate that the document legitimately evolved from a known earlier state

- Reproduce the document's current state by replaying events

- Verify the integrity of document processing
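Replay-based verification for the Simple Counter can be sketched as follows: start from a known earlier state, apply the recorded events in order, and compare the result and the last event id with what the document claims. `ReplayVerifier`, its nested `ClaimedState` record (Java 16+), and `verify` are hypothetical names; a real processor would execute the document's workflows rather than hard-coding the increment.

```java
import java.util.List;
import java.util.Objects;

// Hypothetical replay check for the Simple Counter document (not a Blue API).
final class ReplayVerifier {
    // What a document claims about itself: its counter and checkpointed event.
    record ClaimedState(int counter, String lastEventId) {}

    // True if replaying `events` from `initialCounter` reproduces the claimed
    // counter AND the claimed checkpoint names the last replayed event.
    static boolean verify(int initialCounter, List<String> events, ClaimedState claimed) {
        int counter = initialCounter;
        for (String ignored : events) {
            counter = counter + 1; // hard-coded stand-in for the Update Document step
        }
        // With no events, the checkpoint should still be empty (null).
        String last = events.isEmpty() ? null : events.get(events.size() - 1);
        return counter == claimed.counter() && Objects.equals(last, claimed.lastEventId());
    }
}
```

A document claiming `counter: 2` after events `e1, e2` passes; one claiming `counter: 3`, or a checkpoint pointing at `e1`, fails.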

### 2. Known Present

The checkpoint itself represents the document's "now"—its current position in the event timeline. This gives processors:

- A clear understanding of what state the document represents

- Confidence in which events have been incorporated

- A foundation for consistent behavior across systems

### 3. Processable Future

By knowing exactly which events have been processed, the checkpoint enables:

- Precise continuation of processing from the current state

- Clear criteria for what constitutes a "new" event

- Orderly evolution to future states
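A checkpoint turns "what is new?" into a simple lookup. This sketch assumes a channel exposes its events as an ordered list of BlueIds: everything after the checkpointed id is new, and a null checkpoint means every event is new. `PendingEvents.after` is a hypothetical helper, not part of a Blue library.

```java
import java.util.List;

// Hypothetical helper (not a Blue API): given a channel's ordered event ids
// and the checkpointed last event id, return the events still to process.
final class PendingEvents {
    static List<String> after(List<String> channelEvents, String lastProcessedId) {
        if (lastProcessedId == null) {
            return channelEvents; // nothing processed yet: every event is new
        }
        int index = channelEvents.indexOf(lastProcessedId);
        if (index < 0) {
            // The checkpoint names an event this channel never produced.
            throw new IllegalStateException("unknown checkpoint event: " + lastProcessedId);
        }
        return channelEvents.subList(index + 1, channelEvents.size());
    }
}
```

Failing loudly on an unknown checkpoint id is a design choice in this sketch: silently reprocessing from the start would double-count events.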

## Automatic Checkpoint Management

Blue processors update checkpoints automatically after each event is processed:

```java
// Process an event
ProcessingResult result = processor.processEvent(event);
Node updatedDoc = result.getUpdatedDocument();

// The checkpoint is automatically updated
Node checkpoint = updatedDoc.getAsNode("/contracts/checkpoint/lastEvents/incrementChannel");
String lastEventId = checkpoint.getBlueId();
assert lastEventId.equals(event.getBlueId());
```

====================

DOCUMENT: Events from Multiple Sources

CATEGORY: contracts

URL: https://language.blue/docs/contracts/coordination

====================

# Events from Multiple Sources

Blue documents often receive events from multiple channels. While each channel maintains its own ordering, a key challenge arises: how should events from different channels be sequenced relative to each other? This page explains how Blue handles this challenge through channel coordination contracts.

## The Multi-Channel Counter Problem

Let's start with a concrete example: a document that counts events from both Alice and Bob:

```yaml
name: Alice and Bob Counter
counterAlice: 0
counterBob: 0
contracts:
  aliceChannel:
    type: Timeline Channel
    account: alice@example.com
  bobChannel:
    type: Timeline Channel
    account: bob@example.com
  aliceWorkflow:
    type: Sequential Workflow
    channel: aliceChannel
    steps:
      - name: Update Alice Counter
        type: Update Document
        changeset:
          - op: replace
            path: /counterAlice
            val: ${document('/counterAlice') + 1}
  bobWorkflow:
    type: Sequential Workflow